pedro lopes

Associate Professor at University of Chicago (since 2019).

Lead of the Human Computer Integration Lab in the Department of Computer Science.

Previously: PhD student with Prof. Patrick Baudisch at Hasso Plattner Institute

You can read my PhD thesis here (Best Dissertation Award, University of Potsdam).


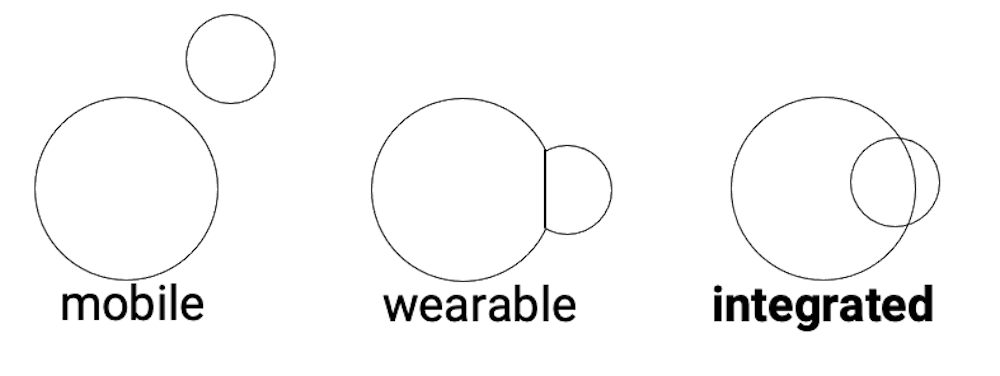

My vision: interactive devices that integrate with the user's body

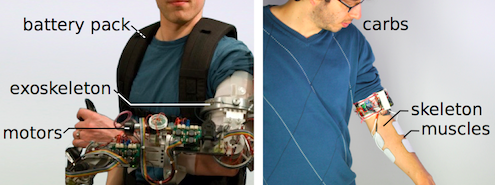

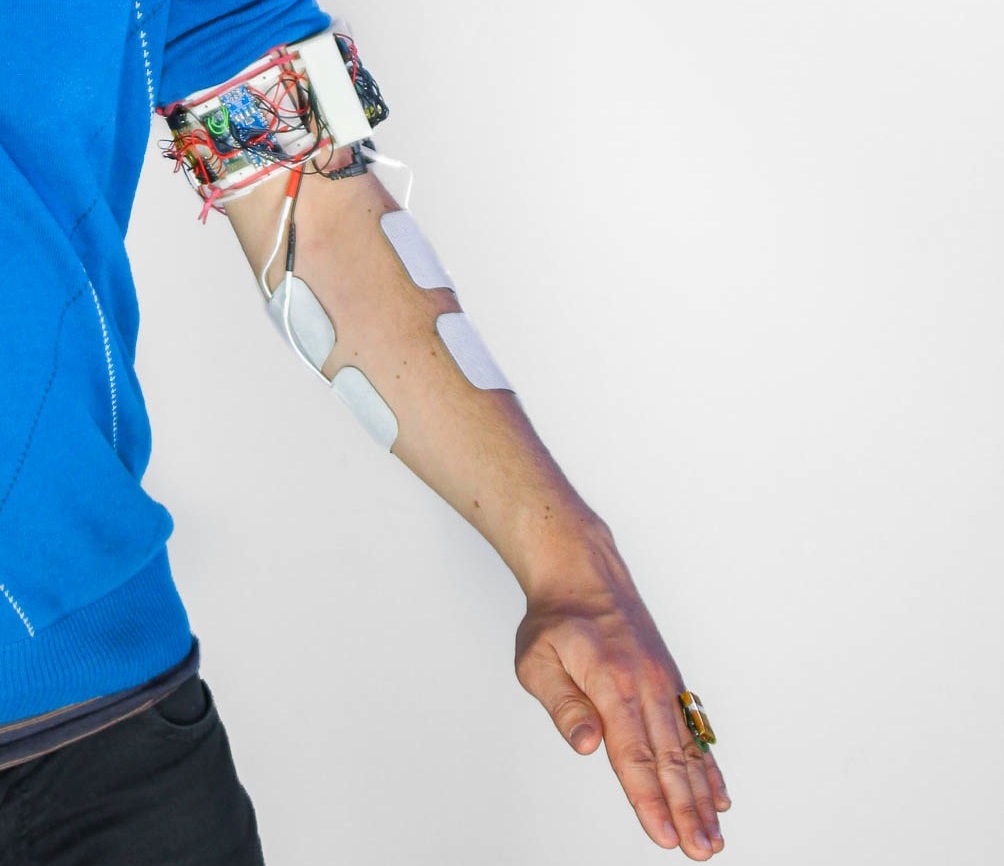

In my lab, we engineer interactive devices that integrate directly with the user’s body. These devices are the natural successors to wearable interfaces and are designed to investigate how interfaces will connect to our body in a more direct and personal way. Previously, in my PhD (thesis), I explored a subset of this concept: interactive devices that interface directly with the user’s muscles. These devices actuate the user's body by means of computer-controlled electrical muscle stimulation (EMS), enabling touch/forces in VR or haptic training without the weight and bulkiness of conventional robotic exoskeletons. These devices gain their advantages not by adding more technology to the body, but by borrowing parts of the user's body as input/output hardware, resulting in devices that are not only exceptionally small, but that also implement a novel interaction model, in which devices integrate directly with the user's body. Recently, we were able to generalize this concept to new modalities, including novel ways to interface with a user’s sense of temperature, smell and rich-touch sensations.

We think bodily-integrated interactive devices are beneficial as they enable new modes of reasoning with computers, going beyond just symbolic thinking (reasoning by typing and reading language on a screen). While this physical integration between human and computer is beneficial in many ways (e.g., faster reaction time, realistic simulations in VR/AR, faster skill acquisition, etc.), it also requires tackling new challenges, such as improving the precision of muscle stimulation or the question of agency: do we feel in control when our body is integrated with an interface? We explore these questions, together with neuroscientists, by understanding how our brain encodes the feeling of agency to improve the design of this new type of integrated interface.

Moreover, we are also exploring how novel computer interfaces can provide assistance and promote attitude change in critical societal challenges, namely reducing electronic waste, for example by changing our relationship with technology or by pushing forward e-waste reuse via computationally-driven recycling.

Want to watch even more videos like this? Check our YouTube channel.

For full keynotes you can hear my Seminar at CMU or my Seminar at UW.

Publications (main venues for technical HCI are ACM CHI & UIST; also, if a paper is numbered it is a CHI/UIST paper, otherwise it is a neuro/science journal or another HCI conference.)

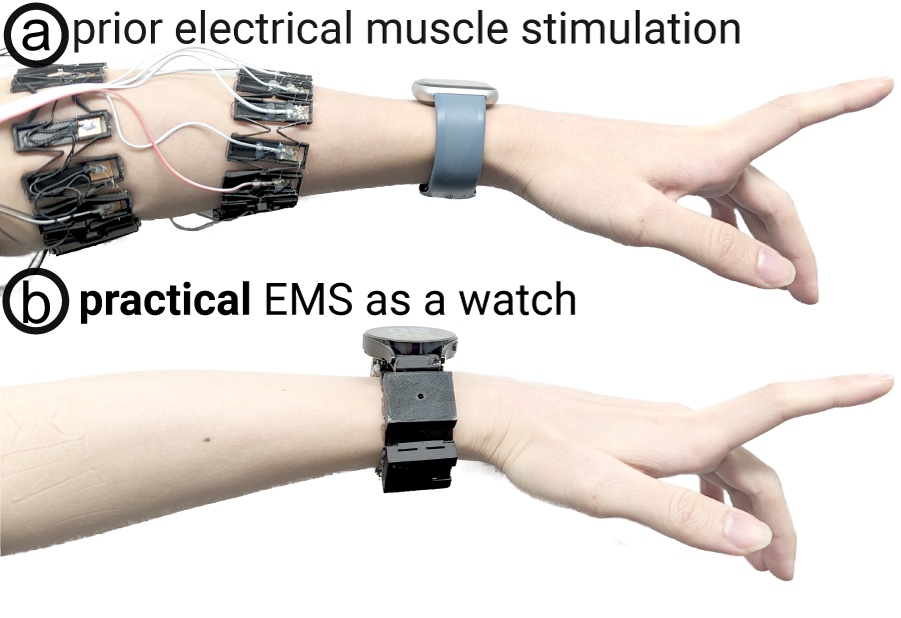

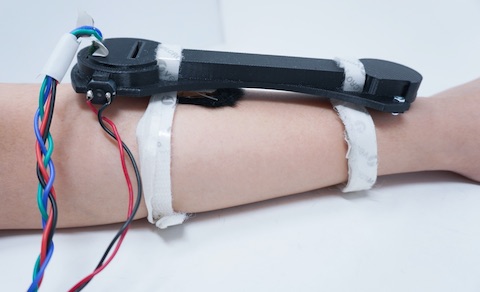

Akifumi Takahashi, Yudai Tanaka, Archit Tamhane, Alan Shen, Shan-Yuan Teng, Pedro Lopes. In Proc. UIST'24

Smartwatches have gained mainstream popularity, making them today’s de facto wearables. Despite advancements in sensing, haptics on smartwatches is still restricted to simple vibrations. Most smartwatch-sized actuators cannot render strong force-feedback. Meanwhile, electrical muscle stimulation (EMS) promises compact force-feedback but, to actuate fingers, requires users to wear many electrodes on their forearms, which keeps EMS from being a practical force-feedback interface. To address this, we propose moving the electrodes to the wrist, conveniently packing them in the backside of a smartwatch. We engineered a compact EMS device that integrates directly into a smartwatch’s wristband (with a custom stimulator, electrodes, demultiplexers, and communication).

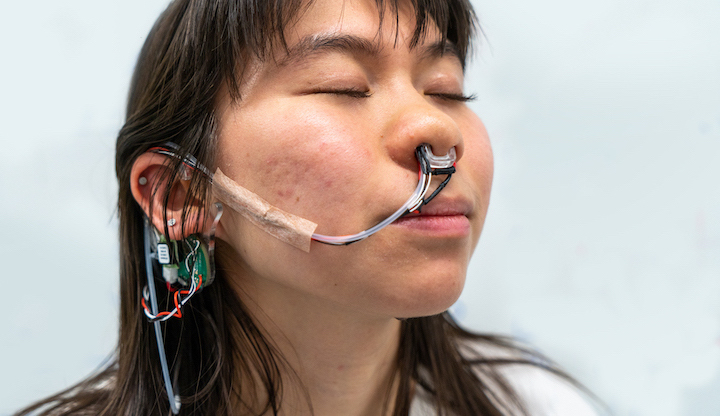

Jas Brooks, Alex Mazursky, Janice Hixon, Pedro Lopes. In Proc. UIST'24

We propose a novel method to augment the feeling of breathing, enabling interactive applications to let users feel like they are inhaling more/less air (perceived nasal airflow). We achieve this effect by cooling or heating the nose in sync with the user’s inhalation. Our illusion builds on the physiology of breathing: we perceive our breath predominantly through the cooling of our nasal cavities during inhalation. This work closes the I/O loop on breathing, which was previously only an input modality, but can now also be used for output (e.g., in VR, meditation, mask relief, etc.).

Yudai Tanaka, Jacob Serfaty, Pedro Lopes. In Proc. CHI'24

CHI best paper award (1%)

UIST honorable mention for best demo award (jury's choice)

We propose a novel concept for haptics in which one centralized on-body actuator renders haptic effects on multiple body parts by stimulating the brain, i.e., the source of the nervous system; we call this a haptic source-effector, as opposed to the traditional wearables’ approach of attaching one actuator per body part (end-effectors). We implement our concept via transcranial magnetic stimulation. Our approach renders ∼15 touch/force-feedback sensations throughout the body (e.g., hands, arms, legs, feet, and jaw, as we found in our user study), all by stimulating the user’s sensorimotor cortex with a magnetic coil moved mechanically across the scalp. While this was presented as a paper at CHI'24, it was also shown as a demo at UIST'24.

Romain Nith, Yun Ho, Pedro Lopes. In Proc. CHI'24

CHI best paper award (1%)

Electrical muscle stimulation (EMS) offers promise in assisting physical tasks by automating movements (e.g., shaking a spray can that the user is using). However, these systems improve the performance of a task that users are already focusing on (e.g., users are already focused on the spray can). Instead, we investigate whether muscle stimulation offers benefits when it automates a task that happens in the background of the user’s focus. We found that automating a repetitive movement via EMS can reduce mental workload while users perform parallel tasks (e.g., focusing on writing an essay while EMS stirs a pot of soup).

Shan-Yuan Teng, Aryan Gupta, Pedro Lopes. In Proc. CHI'24

To minimize the encumbrance of tactile devices for the fingerpads, researchers moved away from thick actuators (e.g., vibration motors) and, instead, focused on thin electrotactile stimulation. However, we argue that just making thin electrodes is not enough and one should also balance how much a tactile device impairs feeling the real world vs. how accurately it delivers virtual sensations. Thus, we propose adding holes to electrotactile devices, which results in: (1) improved tactile perception; and (2) improved force control in grasping. Our approach significantly improves haptic users’ abilities in dexterous activities, including manipulating tools, in mixed reality.

Alex Mazursky, Jacob Serfaty, Pedro Lopes. In Proc. CHI'24

We present Stick&Slip, a novel approach that alters friction between the fingerpad & surfaces by depositing liquid droplets that coat the fingerpad—this liquid modifies the finger’s coefficient of friction, ±60% more slippery or sticky. We selected fluids to rapidly evaporate so that the surface returns to its original friction. Unlike traditional friction-feedback, such as electroadhesion or vibration, our approach: (1) alters friction on a wide range of surfaces and geometries, making it possible to modulate nearly any non-absorbent surface; (2) scales to many objects without requiring instrumenting the target surfaces (e.g., with conductive electrode coatings or vibromotors); and (3) both in/decreases friction via a single device.

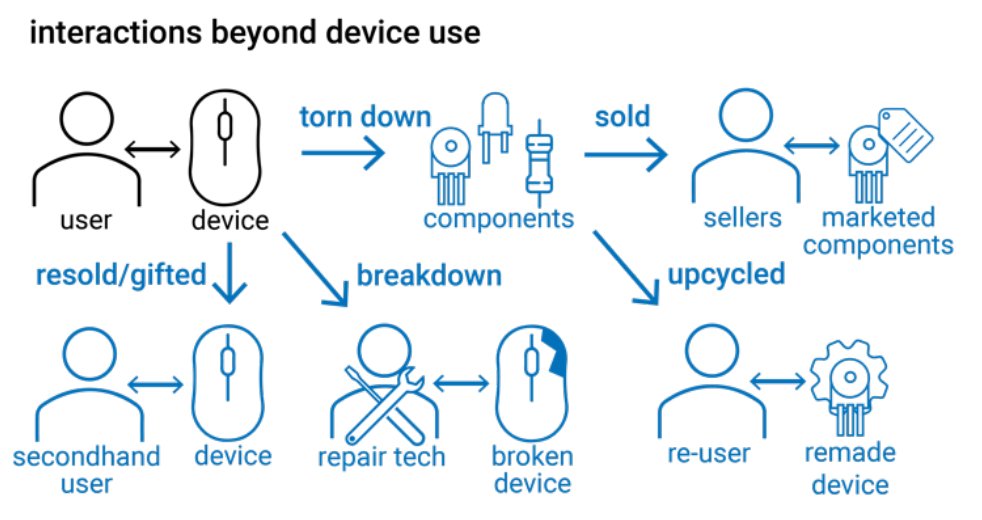

Jasmine Lu, Pedro Lopes. In Proc. TOCHI'24

E-waste is the fastest-growing consumer waste stream worldwide, but also an issue often ignored. In fact, HCI primarily focuses on designing and understanding interactions during only one segment of a device's lifecycle: while users use them. Researchers overlook a significant space: when devices are no longer “useful” (e.g., breakdown or obsolescence). We argue that HCI can learn from experts who upcycle e-waste for electronics projects, art, and more. To acquire this knowledge, we interviewed experts who unmake e-waste. We explore their practices through the lens of unmaking, both when devices are physically unmade and when the perception of e-waste is unmade once waste becomes, once again, useful.

Yuhan Hu, Jasmine Lu, Nathan Scinto-Madonich, Miguel Alfonso Pineros, Pedro Lopes, Guy Hoffman. In Proc. DIS'24

By proposing plant-driven actuators (instead of traditional mechanical motors) we explore a new type of device that grows, ages, and decays. These actuators allow interactive devices, such as robots, to embody these organic qualities in their physical structure. Plant actuators that grow and decay incorporate unpredictable and gradual transformations inherent in living organisms and stand as an alternative to the typical design principles of immediacy, responsiveness, control, accuracy, and durability commonly found in robotic design. This work was a collaboration led by Guy Hoffman and his team at Cornell.

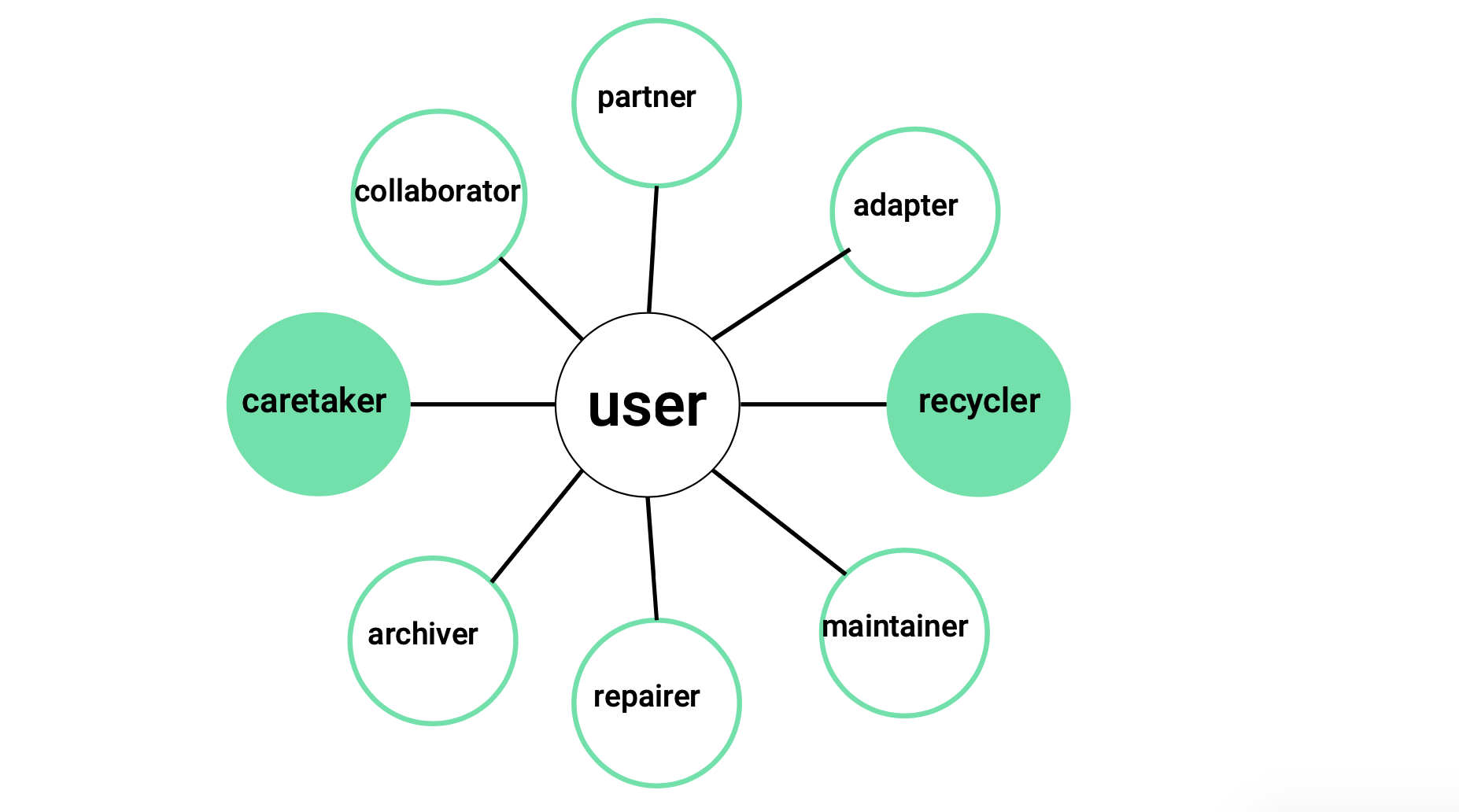

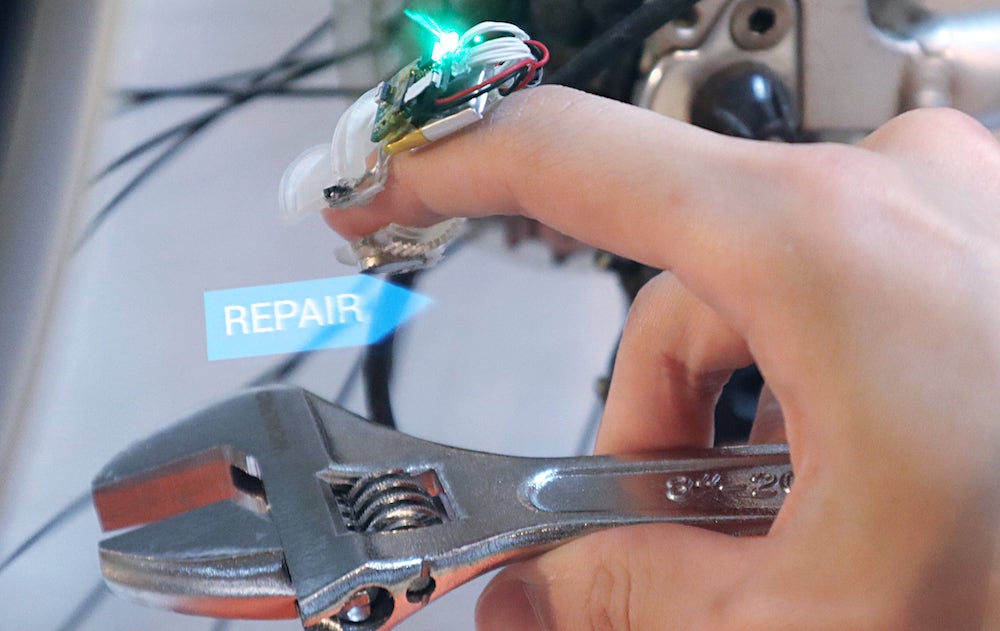

Jasmine Lu, Pedro Lopes. In Proc. IEEE Pervasive Computing'24

In this thought-piece we argue that there are other roles we can take with regard to our technology; essentially, we argue people can be more than just users. To us this is important because as computing becomes pervasive in our lives, more and more e-waste is generated. Exploring new roles for users is thus essential for transitioning toward a more sustainable future in computing. To this end, we argue that the envisioned roles we attribute to users during user-centered design should encompass much more: we can design for user roles such as maintainers, repairers, or even recyclers of interactive devices.

Alex Mazursky, Jas Brooks, Beza Desta, Pedro Lopes. In Proc. IEEE VR'24

Thermal interfaces typically attach Peltiers, heatsinks and fans directly to the palm or sole, preventing users from grasping or walking. Instead, we present ThermalGrasp, an engineering approach for wearable thermal interfaces that enables users to grab and walk on real objects with minimal obstruction. ThermalGrasp moves Peltiers/cooling to areas not used in grasping or walking (e.g., dorsal hand/foot). We then use thin, compliant materials to conduct heat to/from the palm or sole. Using this approach, a user can, for example, grasp a passive prop (e.g., a stick that acts as a torch in VR), yet feel its thermal state (e.g., hot due to its flame).

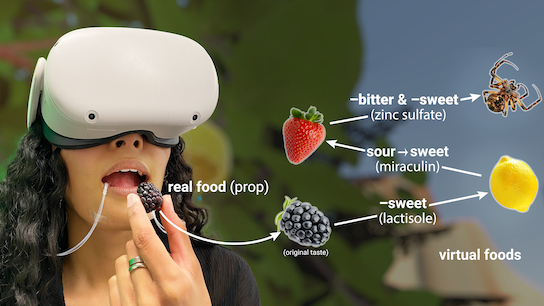

Jas Brooks, Noor Amin, Pedro Lopes. In Proc. UIST'23 (full paper)

UIST Honorable mention award for Demo (Jury's choice)

Taste retargeting selectively changes taste perception using taste modulators, chemicals that temporarily alter the response of taste receptors to foods and beverages. As our technique can deliver droplets of modulators before eating or drinking, it is the first interactive method to selectively alter the basic tastes of real foods without obstructing eating or impacting the food’s consistency. It can be used, for instance, to enable a single food prop to stand in for many virtual foods. For example, it can transform a pickled blackberry into a lemon (by decreasing sweetness with lactisole), a strawberry (by transforming sourness into sweetness with miraculin), and much more.

Jasmine Lu, Beza Desta, K.D. Wu, Romain Nith, Joyce Passananti, Pedro Lopes. In Proc. UIST'23 (full paper)

UIST Honorable mention award

The amount of e-waste generated by discarding devices is enormous. However, inside a discarded device you can find dozens to thousands of reusable components (e.g., microcontrollers, sensors, etc.). Despite this, existing electronic design tools assume users will buy all components anew. To tackle this, we propose ecoEDA, an interactive tool that enables electronics designers to explore recycling electronic components during the design process. It accomplishes this by suggesting which components to recycle based on a library of the user's own devices (discarded, found, broken, etc.).

Yudai Tanaka, Akifumi Takahashi, Pedro Lopes. In Proc. UIST'23 (full paper)

After 10 years of working on electrical muscle stimulation, we wanted to take an introspective look and attempt to uncover any benefits gained by switching from electrical (EMS) to magnetic muscle stimulation (MMS). While much ink has been spilled about the advantages of EMS, not much work has investigated circumventing its key limitations: electrical impulses also cause an uncomfortable "tingling" sensation; EMS relies on pre-gelled electrodes, which require direct contact with the user’s skin, and dry up quickly. To tackle these limitations, we study force-feedback based on magnetic muscle stimulation, which we found to reduce uncomfortable tingling and enable stimulation over the clothes.

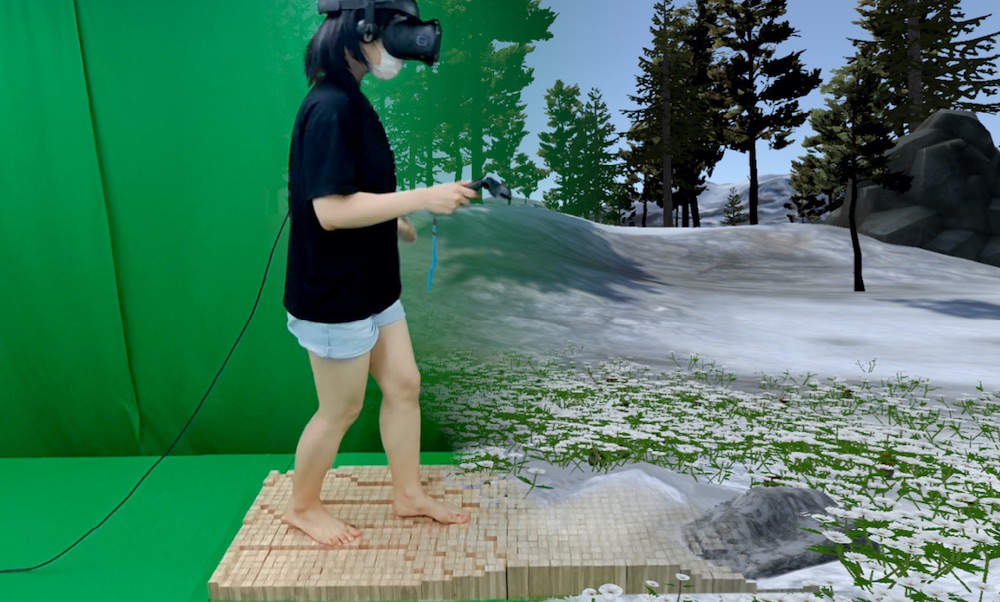

Keigo Ushiyama, Pedro Lopes. In Proc. UIST'23 (full paper)

Most haptic interfaces for the feet use mechanical actuators (e.g., vibration motors), which we argue are not the ideal actuators for the job. Instead, we show that electrotactile stimulation is a powerful foot-haptic interface: (1) users feel not only the stimulation but also the terrain under their feet, which is critical to safely balancing on uneven terrain; (2) while a single vibrotactile actuator will also vibrate surrounding skin areas, electrotactile stimulation feels more localized; (3) it can be applied directly to soles, insoles or socks, enabling new applications such as barefoot interactive experiences (e.g., yoga posture correction) or applications without the need for special shoes (e.g., guidance for low-vision users).

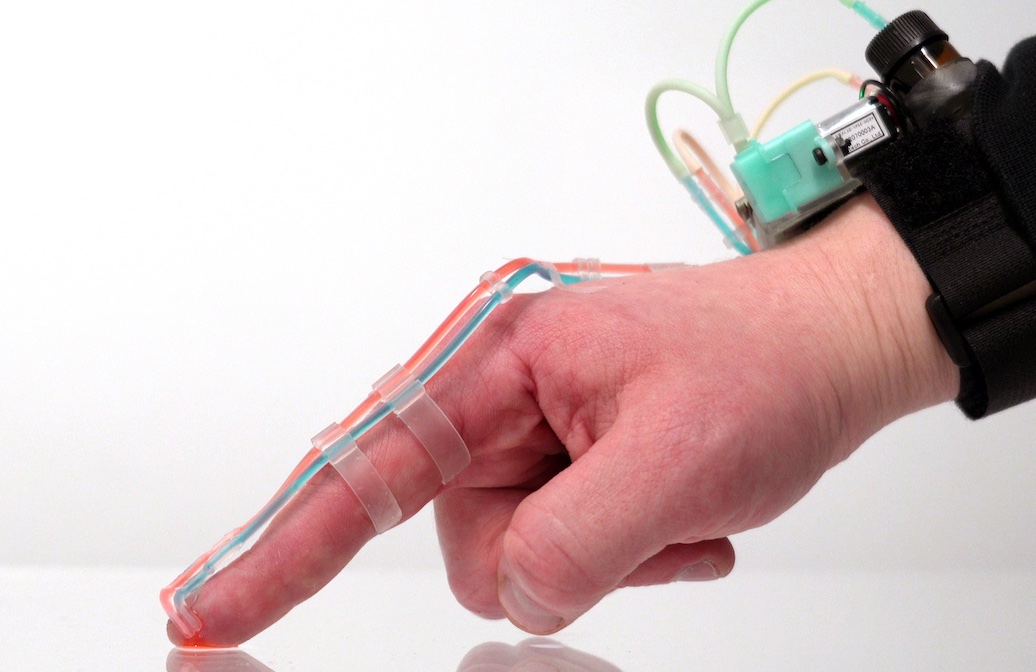

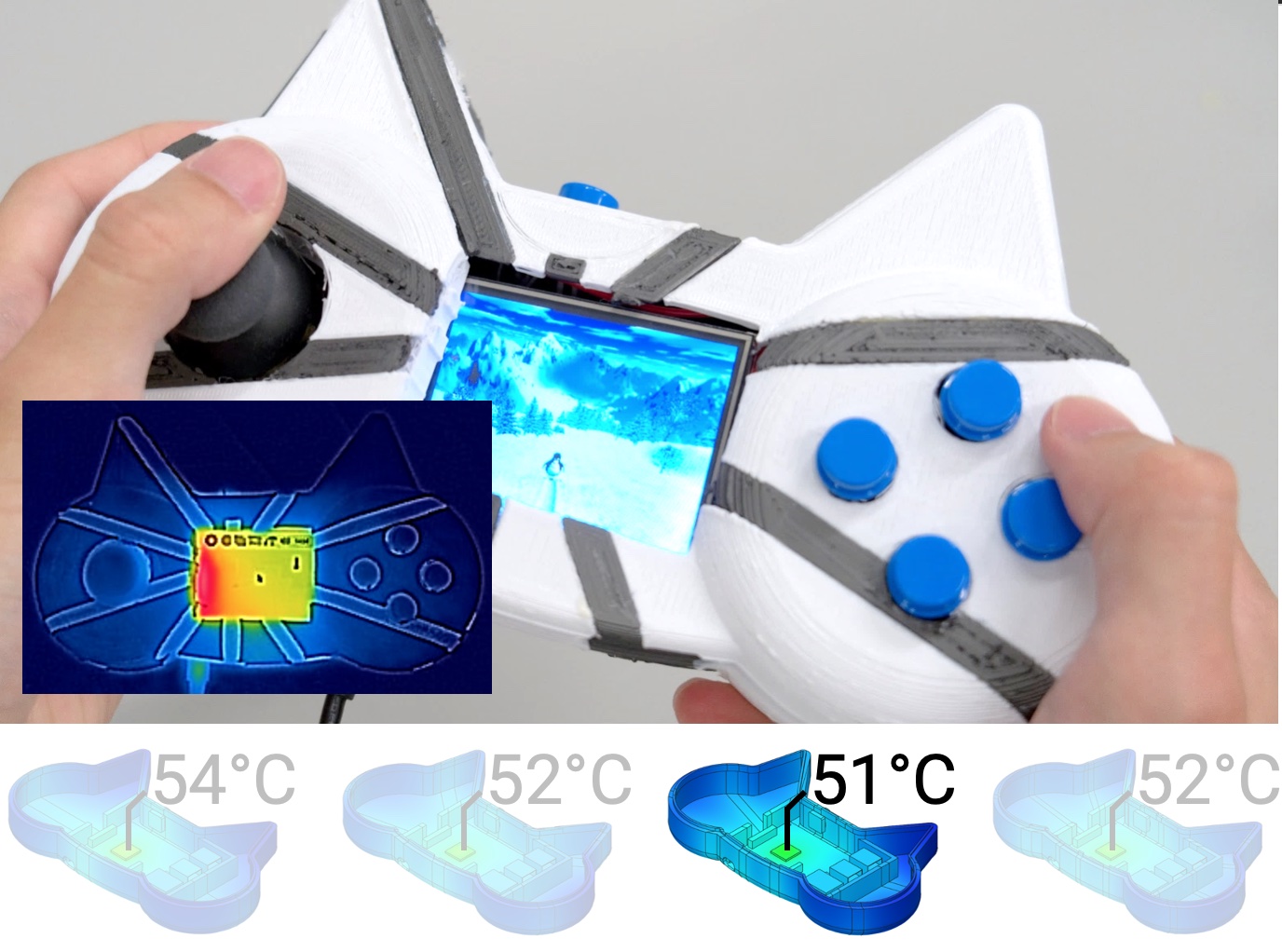

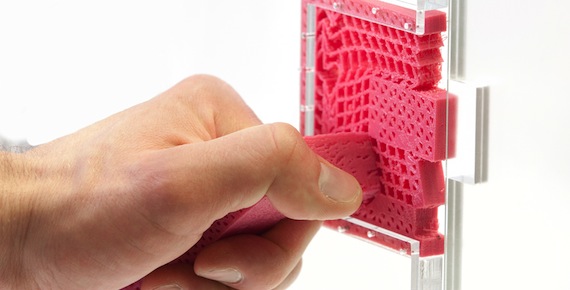

Alex Mazursky, Borui Li, Shan-Yuan Teng, Daria Shifrina, Joyce E. Passananti, Svitlana Midianko, Pedro Lopes. In Proc. UIST'23 (full paper)

Users often 3D model enclosures that interact with heat sources (e.g., CPU, motor, lamps, etc.). While parts made by novices might function well aesthetically or structurally, they are rarely thermally sound. This happens because heat transfer is non-intuitive. To tackle this, we developed ThermalRouter, a CAD plugin that assists users with improving the thermal performance of their models. ThermalRouter automatically converts regions of the model to be made from thermally-conductive materials (such as nylon or metallic-silicone). These regions act as heat channels, branching away from hotspots to dissipate heat. The key is that ThermalRouter automatically simulates the thermal performance of many possible heat channel configurations and presents the user with the most thermally sound design (e.g., lowest temperature).
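To make the "simulate many configurations, keep the coolest" idea concrete, here is a minimal sketch. It is not ThermalRouter's simulation: it assumes a toy lumped model in which the enclosure wall and every heat channel conduct in parallel, and all names (`Channel`, `peak_temperature`, `best_configuration`) and numeric defaults are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Channel:
    """A candidate heat channel (hypothetical; not ThermalRouter's data model)."""
    name: str
    resistance_k_per_w: float  # thermal resistance of this channel, in K/W


def peak_temperature(channels, power_w, ambient_c, base_resistance_k_per_w):
    """Toy lumped model: the wall and all channels conduct heat in parallel."""
    conductance = 1.0 / base_resistance_k_per_w
    conductance += sum(1.0 / c.resistance_k_per_w for c in channels)
    return ambient_c + power_w / conductance


def best_configuration(candidates, max_channels, power_w=5.0,
                       ambient_c=25.0, base_resistance_k_per_w=20.0):
    """Evaluate every subset of up to max_channels channels; return the coolest."""
    configs = [combo
               for k in range(max_channels + 1)
               for combo in combinations(candidates, k)]
    return min(configs, key=lambda cfg: peak_temperature(
        cfg, power_w, ambient_c, base_resistance_k_per_w))


candidates = [Channel("A", 10.0), Channel("B", 40.0), Channel("C", 20.0)]
best = best_configuration(candidates, max_channels=2)
print(sorted(c.name for c in best))
```

A real tool would replace `peak_temperature` with a proper heat-transfer simulation of the 3D model; the exhaustive search here only illustrates the selection step.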

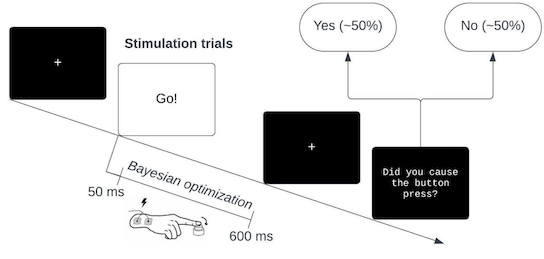

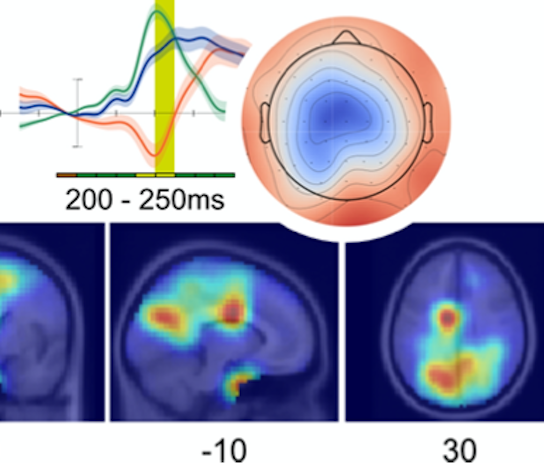

John P. Veillette, Pedro Lopes, Howard C. Nusbaum. In Proc. Journal of Neuroscience'23 (full paper)

We investigate the time course of neural activity that predicts the sense of agency over electrically actuated movements. We find evidence of two distinct neural processes: a transient sequence of patterns that begins in the early sensorineural response to muscle stimulation and a later, sustained signature of agency. This work is part of our lab's exploration of how future interactive devices need to be designed to prioritize the user's sense of agency; read more at our project's page (we have six other papers on this topic).

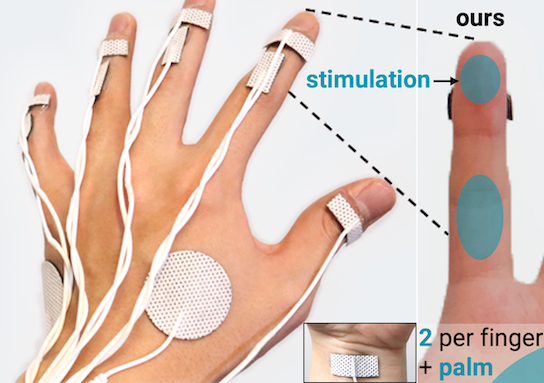

Yudai Tanaka, Alan Shen, Andy Kong, Pedro Lopes. In Proc. CHI'23 (full paper)

CHI best paper award (1%)

This technique renders tactile feedback to the palmar side of the hand while keeping it unobstructed and, thus, preserving manual dexterity. We implement this by applying electro-tactile stimulation without any electrodes on the palmar side, yet that is where tactile sensations are felt. This creates tactile sensations on 11 different places of the palm and fingers while keeping the palms free for dexterous manipulations (e.g., VR with props, AR with tools and more).

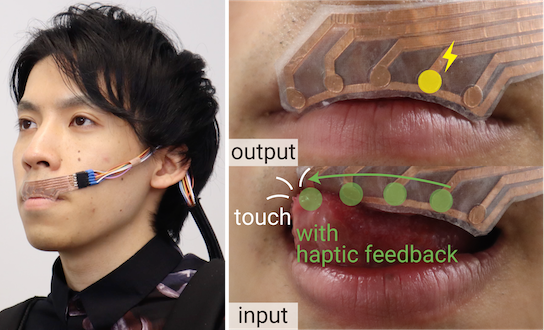

Arata Jingu, Yudai Tanaka, Pedro Lopes. In Proc. CHI'23 (full paper)

LipIO enables the lips to be used simultaneously as an input and output surface. It is made from two overlapping flexible electrode arrays: an outward-facing array for capacitive touch and a lip-facing array for electrotactile stimulation. While wearing LipIO, users feel the interface's state via lip stimulation and respond by touching their lip with their tongue or opposing lip. More importantly, LipIO provides co-located tactile feedback that allows users to feel where on the lip they are touching; this is key to enabling eyes- and hands-free interactions. We tend to think of LipIO as an extreme user interface for situations in which the user's eyes, hands and even ears are already occupied with a primary task. We show, for instance, how a user could make use of LipIO to control an interface while biking or while playing guitar.

Romain Nith, Jacob Serfaty, Samuel Shatzkin, Alan Shen, Pedro Lopes. In Proc. CHI'23 (full paper)

To enable interactive experiences to feature jump-based haptics without sacrificing wearability, we propose JumpMod, an untethered backpack that modifies one's sense of jumping. JumpMod achieves this by moving a weight up/down along the user's back, which modifies perceived jump momentum, creating accelerated and decelerated jump sensations. In our second study, we empirically found that our device can render five effects: jump higher, land harder/softer, pulled higher/lower. Based on these, we designed four jumping experiences for VR and sports. Finally, in our third study, we found that participants preferred wearing our device in an interactive context, such as one of our jump-based VR applications.

Jas Brooks, Pedro Lopes. In Proc. CHI'23 (full paper)

Low-fidelity prototyping is foundational to Human-Computer Interaction, appearing in most early design phases. So, how do experts prototype olfactory experiences? We interviewed eight experts and found that they do not, because no process supports this. Thus, we engineered Smell & Paste, a low-fidelity prototyping toolkit. Designers assemble olfactory proofs-of-concept by pasting scratch-and-sniff stickers onto a paper tape. Then, they test the interaction by advancing the tape in our 3D-printed (or cardboard) cassette, which releases the smells via scratching.

Yiran Zhao, Yujie Tao, Grace Le, Rui Maki, Alexander Adams, Pedro Lopes, and Tanzeem Choudhury. In Proc. UbiComp'23 (IMWUT) (full paper)

We investigated affective touch as a new pathway to passively mitigate in-the-moment anxiety. While existing mobile interventions offer great promise for health and well-being, they typically focus on achieving long-term effects such as shifting behaviors and are thus not suited to giving immediate help, e.g., when a user experiences a high anxiety level. To this end, we engineered a wearable device that renders a soft stroking sensation on the user's forearm. Our results showed that participants who received affective touch experienced lower state anxiety and the same physiological stress response level compared to the control group participants. This work was a collaboration led by Tanzeem Choudhury and her team at Cornell.

Jasmine Lu and Pedro Lopes. In Proc. UIST'22

We explore how embedding a living organism, as a functional component of a device, changes the user-device relationship. In our concept, the user is responsible for providing an environment that the organism can thrive in by caring for the organism. We instantiated this concept as a slime mold integrated smartwatch. The slime mold grows to form an electrical wire that enables a heart rate sensor. The availability of the sensing depends on the slime mold’s growth, which the user encourages through care. If the user does not care for the slime mold, it enters a dormant stage and is not conductive. The users can resuscitate it by resuming care.

UIST'22 honorable mention award

Shan-Yuan Teng, K. D. Wu, Jacqueline Chen, Pedro Lopes. In Proc. UIST'22

We propose a new technical approach to implement VR haptic devices that contain no battery, yet can render on-demand haptic feedback. Our haptic device charges by harvesting the user’s kinetic energy (i.e., movement), even without the user needing to realize this. Whenever our batteryless haptic device is about to lose power, it switches to harvesting mode (by engaging its clutch to a generator) and, simultaneously, the VR headset renders an alternative version of the current experience that depicts resistive forces (e.g., rowing a boat in VR). As a result, the user feels realistic haptics that correspond to what they should be feeling in VR, while unknowingly charging the device via their movements. The VR experience can then use the harvested power for on-demand haptics, including vibration or force-feedback; this process can be repeated ad infinitum.
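The harvest-then-render loop described above can be pictured as a small state machine. The following is only an illustrative sketch, not the device's firmware: the class name, the energy units, both thresholds, the hysteresis between them, and the choice to defer on-demand haptics while harvesting are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class BatterylessHaptics:
    """Hypothetical model of a batteryless haptic device's energy state."""
    stored_mj: float = 0.0            # energy currently stored (assumed unit: mJ)
    low_threshold_mj: float = 20.0    # below this, engage the clutch and harvest
    full_threshold_mj: float = 100.0  # at or above this, stop harvesting
    harvesting: bool = False

    def update_mode(self) -> str:
        """Pick the device mode and the matching VR scene variant."""
        if self.stored_mj < self.low_threshold_mj:
            self.harvesting = True    # about to lose power: start harvesting
        elif self.stored_mj >= self.full_threshold_mj:
            self.harvesting = False   # enough energy: release the clutch
        return "resistive_scene" if self.harvesting else "normal_scene"

    def on_user_motion(self, harvested_mj: float) -> None:
        """User movement only charges the device while the clutch is engaged."""
        if self.harvesting:
            self.stored_mj += harvested_mj

    def render_haptic(self, cost_mj: float) -> bool:
        """Spend stored energy on an on-demand effect, if possible."""
        if self.harvesting or self.stored_mj < cost_mj:
            return False
        self.stored_mj -= cost_mj
        return True


device = BatterylessHaptics()
print(device.update_mode())       # empty device starts in the resistive scene
device.on_user_motion(110.0)      # e.g., rowing against the generator
print(device.update_mode())       # charged: back to the normal scene
print(device.render_haptic(30.0))
```

The two thresholds give the mode switch some hysteresis so the scene does not flip back and forth around a single energy level; the real system may decide this differently.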

Yujie Tao, Pedro Lopes. In Proc. UIST'22

We explore a new concept, where we directly integrate the distracting stimuli from the user’s physical surroundings into their virtual reality experience to enhance presence. Using our approach, an otherwise distracting wind gust can be directly mapped to the sway of trees in a VR experience that already contains trees. We demonstrate how to integrate a range of distracting stimuli into the VR experience, such as haptics (temperature, vibrations, touch), sounds, and smells. From the results of three studies, we engineered a sensing module that detects a set of distracting signals during any VR experience (e.g., sounds, winds, and temperature shifts) and responds by triggering pre-made VR sequences that will feel realistic to the user.

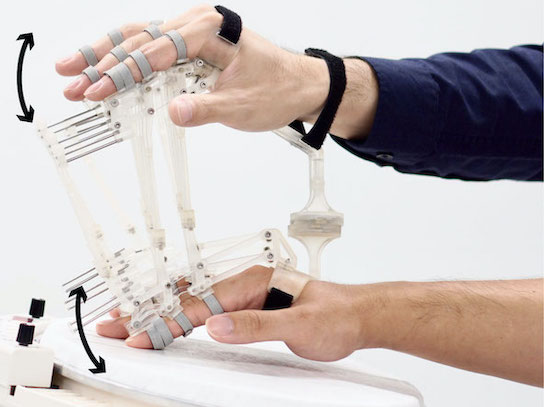

Jun Nishida, Yudai Tanaka, Romain Nith, Pedro Lopes. In Proc. UIST'22

We engineered DigituSync, a passive-exoskeleton that physically links two hands together, enabling two users to adaptively transmit finger movements in real-time. It uses multiple four-bar linkages to transfer both motion and force, while still preserving congruent haptic feedback. Moreover, we implemented a variable-length linkage that allows adjusting the force transmission ratio between the two users and regulates the amount of intervention, which enables users to customize their learning experience. DigituSync’s benefits emerge from its passive design: unlike existing haptic devices (motor-based exoskeletons or electrical muscle stimulation), DigituSync has virtually no latency and does not require batteries/electronics to transmit or adjust movements, making it useful and safe to deploy in many settings, such as between students and teachers in a classroom.

CHI'22 best demo award (people's choice)

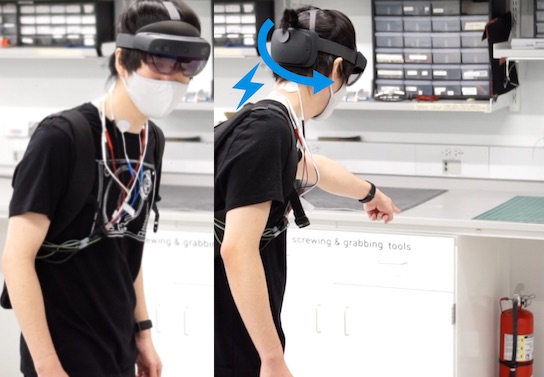

Yudai Tanaka, Jun Nishida and Pedro Lopes. In Proc. CHI’22.

We propose a novel interface concept in which interactive systems directly manipulate the user’s head orientation. We implement this using electrical muscle stimulation (EMS) of the neck muscles, which turns the head around its yaw (left/right) and pitch (up/down) axes. As the first exploration of EMS for head actuation, we characterized which muscles can be robustly actuated and demonstrated how it enables interactions not possible before by building a range of applications, such as (1) synchronizing head orientations of two users, which enables a user to communicate head nods to another user while listening to music, and (2) directly changing the user’s head orientation to locate objects in augmented reality.

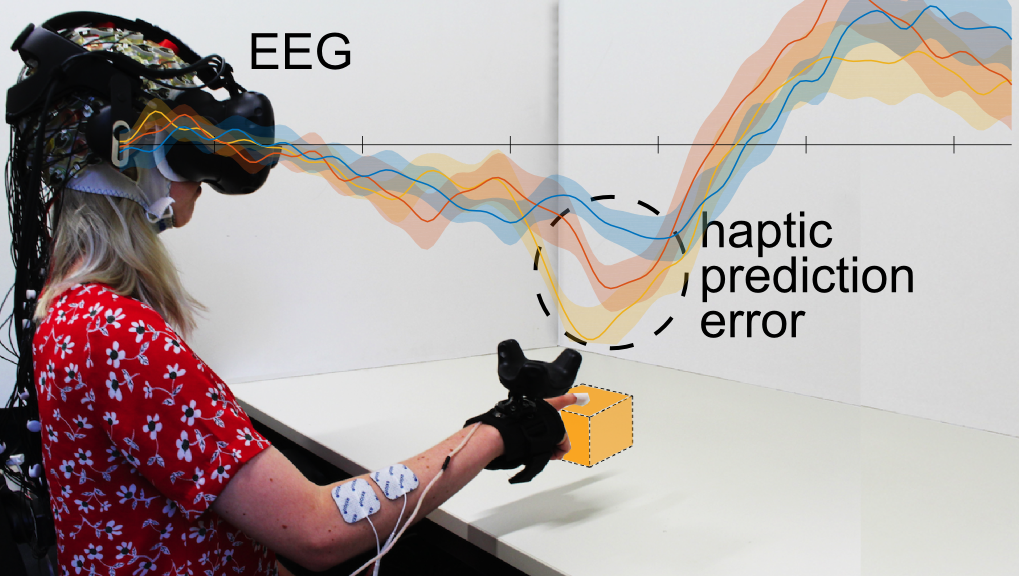

Lukas Gehrke, Pedro Lopes, Marius Klug, Sezen Akman and Klaus Gramann. In Proc. Journal of Neural Engineering 2022.

In VR, designing immersion is one key challenge. Subjective questionnaires are the established metrics to assess the effectiveness of immersive VR simulations. However, administering questionnaires requires breaking the immersive experience they are supposed to assess. We present a complementary metric based on ERPs. For the metric to be robust, the neural signal employed must be reliable. Hence, it is beneficial to target the neural signal's cortical origin directly, efficiently separating signal from noise. To test this new complementary metric, we designed a reach-to-tap paradigm in VR to probe EEG and movement adaptation to visuo-haptic glitches. Our working hypothesis was that these glitches, or violations of the predicted action outcome, may indicate a disrupted user experience. Using prediction error negativity features, we classified VR glitches with ~77% accuracy. This work was a collaboration led by Klaus Gramann and his team at the Neuroscience Department at the TU Berlin.

Jasmine Lu, Ziwei Liu, Jas Brooks, Pedro Lopes. In Proc. UIST’21.

We propose a new class of haptic devices that provide haptic sensations by delivering liquid-stimulants to the user’s skin; we call this chemical haptics. Upon absorbing these stimulants, which contain safe and small doses of key active ingredients, receptors in the user’s skin are chemically triggered, rendering distinct haptic sensations. We identified five chemicals that can render lasting haptic sensations: tingling (sanshool), numbing (lidocaine), stinging (cinnamaldehyde), warming (capsaicin), and cooling (menthol). To enable the application of our novel approach in a variety of settings (such as VR), we engineered a self-contained wearable that can be worn anywhere on the user’s skin (e.g., face, arms, legs).

Romain Nith, Shan-Yuan Teng, Pengyu Li, Yujie Tao, and Pedro Lopes. In Proc. UIST’21.

DextrEMS is a haptic device designed to improve the dexterity of electrical muscle stimulation (EMS). It achieves this by combining EMS with a mechanical brake on all finger joints. These brakes allow us to solve two fundamental problems with current EMS devices: lack of independent actuation (i.e., when a target finger is actuated via EMS, it also often causes unwanted movements in other fingers); and unwanted oscillations (i.e., to stop a finger, EMS needs to continuously contract the opposing muscle). Using its brakes, dextrEMS achieves unprecedented dexterity, in both EMS finger flexion and extension, enabling applications not possible with existing EMS-based interactive devices, such as: actuating the user’s fingers to pose simple letters in sign language, precise VR force-feedback or even playing the guitar.

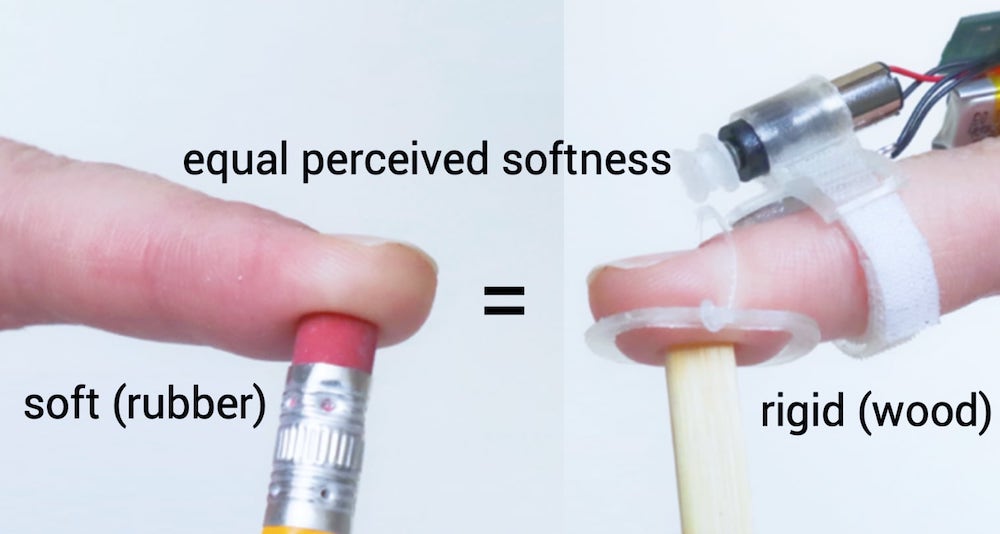

Yujie Tao, Shan-Yuan Teng, Pedro Lopes. In Proc. UIST’21.

We propose a wearable haptic device that alters the perceived softness of everyday objects without instrumenting the object itself. Simultaneously, our device achieves this softness illusion while leaving most of the user's fingerpad free, allowing users to feel the texture of the object they touch. We demonstrate the robustness of this haptic illusion alongside various interactive applications, such as making the same VR prop display different softness states or allowing a rigid 3D printed button to feel soft, like a real rubber button.

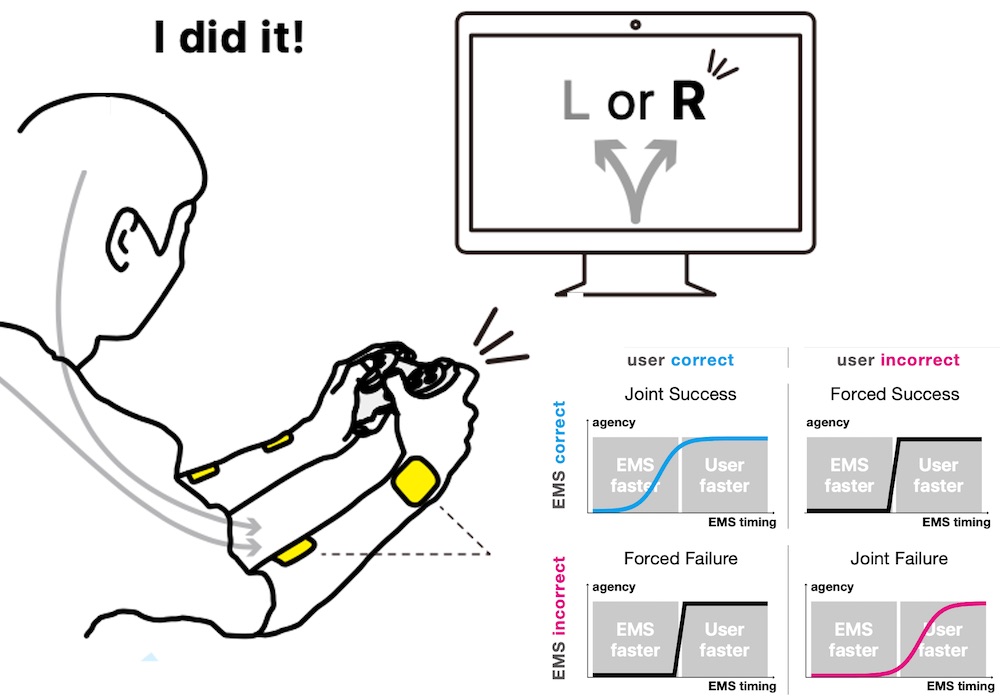

Daisuke Tajima, Jun Nishida, Pedro Lopes and Shunichi Kasahara. In Proc. TOCHI’22.

Force-feedback interfaces actuate the user's body to touch involuntarily (using exoskeletons or electrical muscle stimulation); we refer to this as computer-driven touch. Unfortunately, forcing users to touch causes a loss of their sense of agency. While prior work found that delaying the timing of computer-driven touch preserves agency, it only considered the naive case when user-driven touch is aligned with computer-driven touch. We argue this is unlikely as it assumes we can perfectly predict user-touches. But what about all the remaining situations: when the haptics forces the user into an outcome they did not intend or assists the user in an outcome they would not achieve alone? We unveil, via an experiment, what happens in these novel situations. From our findings, we synthesize a framework that enables researchers of digital-touch systems to trade off between haptic-assistance vs. sense-of-agency. Read more at our project's page (we have five other papers on this topic).

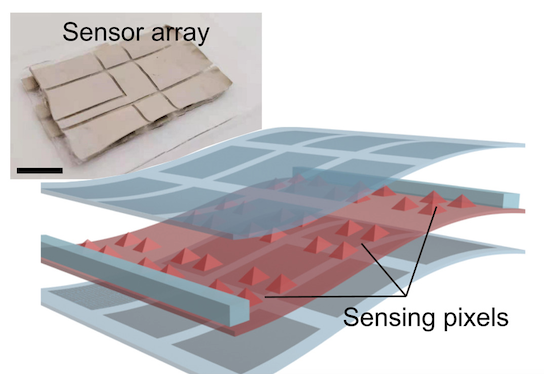

Qi Su, Q. Zou, Yang Li, Yuzhen Chen, Shan-Yuan Teng, Jane Tunde Kelleher, Romain Nith, Ping Cheng, Nan Li, Wei Liu, Shilei Dai, Youdi Liu, Alex Mazursky, Jie Xu, Lihua Jin, Pedro Lopes, Sihong Wang. In Science Advances

Existing types of stretchable pressure sensors have an inherent limitation: stretching interferes with the accuracy of pressure sensing. We present a new design concept for a highly stretchable and highly sensitive pressure sensor that can provide unaltered sensing performance under stretching, which is realized through the creation of locally and biaxially stiffened micro-pyramids made from an ionic elastomer. This work was a collaboration led by Sihong Wang and his team at the Molecular Engineering Department at the University of Chicago.

Akifumi Takahashi, Jas Brooks, Hiroyuki Kajimoto, Pedro Lopes. In Proc. CHI'21

CHI'21 best paper award, top 1% and CHI'21 best demo award (audience's choice)

We improved the dexterity of the finger flexion produced by interactive devices based on electrical muscle stimulation (EMS). The key to achieving this is a new electrode layout that we discovered on the back of the hand. Instead of the existing EMS electrode placement, which flexes the fingers via the flexor muscles in the forearm, we stimulate the interossei/lumbricals muscles in the palm. Our technique allows EMS to achieve greater dexterity around the metacarpophalangeal joints (MCP), which we demonstrate in a series of applications, such as playing individual piano notes, doing a two-stroke drum roll or playing barred guitar frets. These examples were previously impossible with existing EMS electrode layouts.

Jas Brooks, Shan-Yuan Teng, Jingxuan Wen, Romain Nith, Jun Nishida, Pedro Lopes. In Proc. CHI'21

We engineered a device that creates a stereo-smell experience, i.e., directional information about the location of an odor, by rendering the readings of external odor sensors as trigeminal sensations using electrical stimulation of the user’s nasal septum. The key is that the sensations from the trigeminal nerve, which arise from nerve-endings in the nose, are perceptually fused with those of the olfactory bulb (the brain region that senses smells). We use this sensation to then allow participants to track a virtual smell source in a room without any previous training.

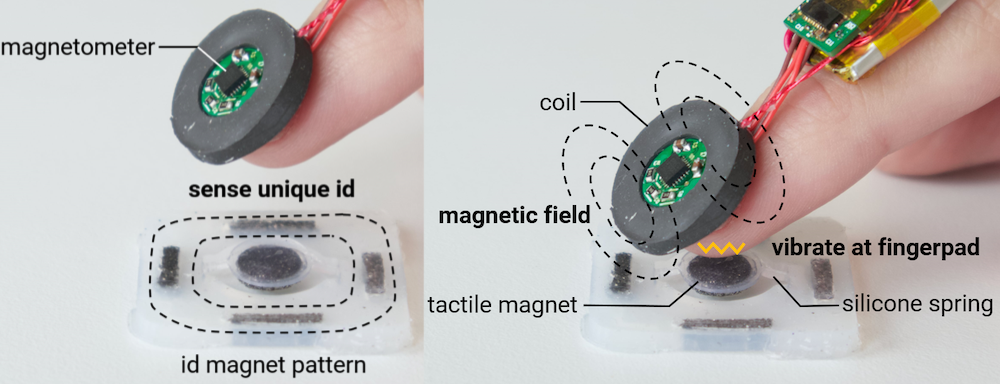

Alex Mazursky, Shan-Yuan Teng, Romain Nith, Pedro Lopes. In Proc. CHI'21 and SIGGRAPH'21 eTech.

We propose a new type of haptic actuator, which we call MagnetIO, that is comprised of two parts: any number of soft interactive patches that can be applied anywhere and one battery-powered voice-coil worn on the user’s fingernail. When the fingernail-worn device contacts any of the interactive patches it detects its magnetic signature and makes the patch vibrate. To allow these otherwise passive patches to vibrate, we make them from silicone with regions doped with neodymium powder, resulting in soft and stretchable magnets. This novel decoupling of traditional vibration motors allows users to add interactive patches to their surroundings by attaching them to walls, objects or even other devices or appliances without instrumenting the object with electronics.

Shan-Yuan Teng, Pengyu Li, Romain Nith, Jersey Fonseca, Pedro Lopes. In Proc. CHI'21 and SIGGRAPH'21 eTech.

CHI'21 honorable mention award, top 5%

We propose a nail-mounted foldable haptic device that provides tactile feedback to mixed reality (MR) environments by pressing against the user’s fingerpad when a user touches a virtual object. What is novel in our device is that it quickly tucks away when the user interacts with real-world objects. Its design allows it to fold back on top of the user’s nail when not in use, keeping the user’s fingerpad free to, for instance, manipulate handheld tools and other objects while in MR. To achieve this, we engineered a wireless and self-contained haptic device, which measures 24×24×41 mm and weighs 9.5 g. Furthermore, our foldable end-effector also features a linear resonant actuator, allowing it to render not only touch contacts (i.e., pressure) but also textures (i.e., vibrations).

Yuxin Chen, Zhuolin Yang, Ruben Abbou, Pedro Lopes, Ben Zhao, Heather Zheng. In Proc. CHI'21.

We propose a novel modality for active biometric authentication: electrical muscle stimulation (EMS). To explore this, we engineered ElectricAuth, a wearable that stimulates the user’s forearm muscles with a sequence of electrical impulses (i.e., EMS challenge) and measures the user’s involuntary finger movements (i.e., response to the challenge). ElectricAuth leverages EMS’s intersubject variability, where the same electrical stimulation results in different movements in different users because everybody’s physiology is unique (e.g., differences in bone and muscular structure, skin resistance and composition, etc.). As such, ElectricAuth allows users to log in without memorizing passwords or PINs. This work was a collaboration led by Heather Zheng (SAND Lab).

Seungwoo Je, Hyunseung Lim, Kongpyung Moon, Shan-Yuan Teng, Jas Brooks, Pedro Lopes, Andrea Bianchi. In Proc. CHI'21.

Existing shape-changing floors are limited by their tabletop scale or the coarse resolution of the terrains they can display due to the limited number of actuators and low vertical resolution. To tackle this, we engineered Elevate, a dynamic and walkable pin-array floor on which users can experience not only large variations in shapes but also the details of the underlying terrain. Our system achieves this by packing 1200 pins arranged on a 1.80 x 0.60m platform, in which each pin can be actuated to one of ten height levels (resolution: 15mm/level). This work was a collaboration and was led by Andrea Bianchi, who runs the MAKinteract group at KAIST.
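To make the pin-array numbers concrete, here is a minimal sketch of how a desired terrain height could be quantized to one of the ten pin levels. Only the level count and the 15 mm step come from the description above; the 60 x 20 grid layout, the convention that level 0 is flush with the floor, and the function names are assumptions for illustration, not the actual Elevate implementation.

```python
# Values stated in the text above.
NUM_LEVELS = 10      # each pin can take one of ten height levels
MM_PER_LEVEL = 15    # vertical resolution: 15 mm per level

# Assumptions for illustration only: a 60 x 20 grid (1200 pins at a 3 cm
# pitch would fill a 1.80 x 0.60 m platform) and level 0 = flush with floor.
COLS, ROWS = 60, 20


def height_to_level(height_mm: float) -> int:
    """Quantize a desired terrain height (mm) to the nearest pin level."""
    level = round(height_mm / MM_PER_LEVEL)
    # Clamp to the range of levels a pin can reach.
    return max(0, min(NUM_LEVELS - 1, level))


def terrain_to_levels(terrain_mm):
    """Map a grid of heights (mm) to a grid of pin levels."""
    return [[height_to_level(h) for h in row] for row in terrain_mm]


# Example: a gentle ramp along the long axis of the platform.
ramp = [[c * 2.5 for c in range(COLS)] for _ in range(ROWS)]
levels = terrain_to_levels(ramp)
```

Under these assumptions, any height above 135 mm saturates at the top level, which is why fine terrain detail has to fit within the ten available steps.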

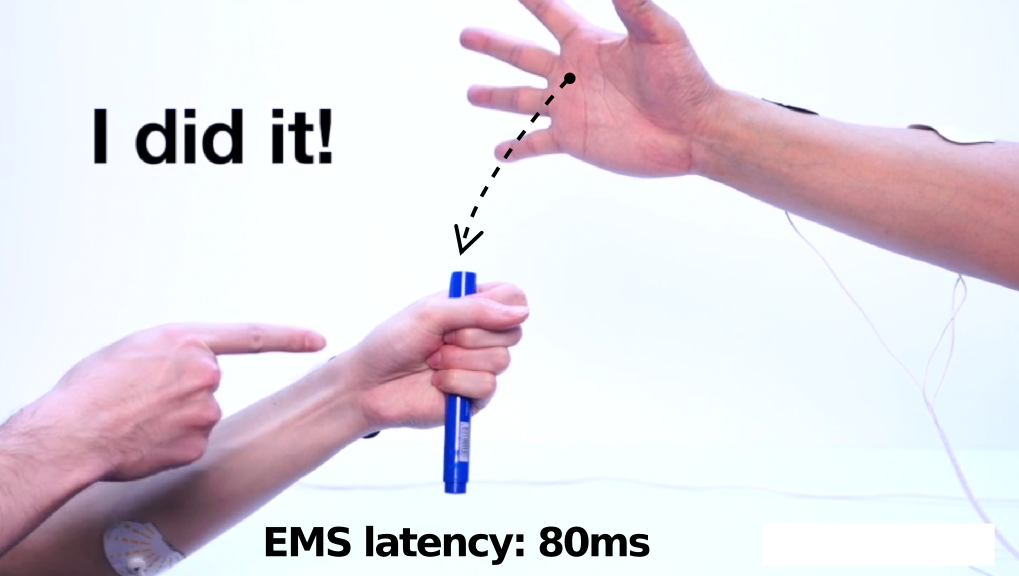

Shunichi Kasahara, Kazuma Takada, Jun Nishida, Kazuhisa Shibata, Shinsuke Shimojo, Pedro Lopes. In Proc. CHI'21.

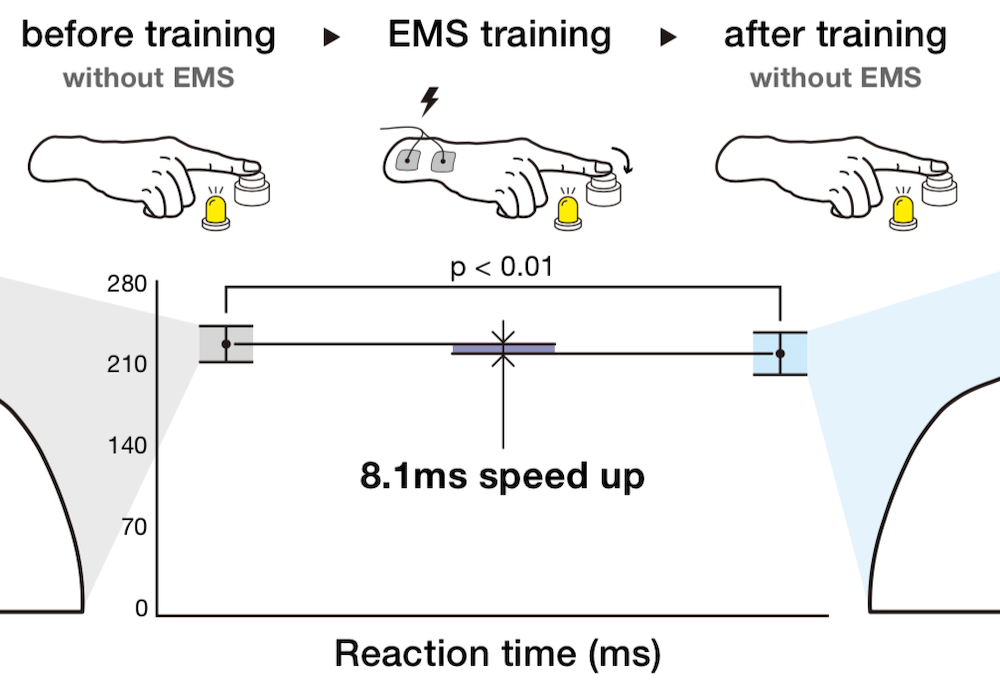

We found that after training for reaction time tasks with electrical muscle stimulation (EMS), one's reaction time is accelerated even after the EMS device is removed. What is remarkable is that the key to the optimal speedup is not applying EMS as soon as possible (the traditional view on EMS stimulus timing) but delaying the EMS stimulus closer to the user's own reaction time, while still delivering the stimulus faster than humanly possible; this preserves some of the user's sense of agency while still accelerating them to superhuman speeds (we call this Preemptive Action). This was done in cooperation with our colleagues from Sony CSL, RIKEN Center for Brain Science, and CalTech. Read more at our project's page.
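The timing idea above can be sketched as a one-line rule: rather than firing EMS immediately after the cue, fire it shortly before the user's own expected reaction. The function name and the `lead_ms` parameter are hypothetical, and the numbers in the usage note are illustrative only; the text above does not state specific values.

```python
def ems_onset_ms(user_reaction_ms: float, lead_ms: float) -> float:
    """Time after the cue (ms) at which to deliver the EMS stimulus.

    user_reaction_ms: the user's own measured reaction time to the cue.
    lead_ms: how much earlier than the user's own reaction the EMS fires.
             Hypothetical tuning parameter; no value is given in the text.
    """
    if lead_ms <= 0:
        # The stimulus must still arrive before the user would move unaided.
        raise ValueError("lead_ms must be positive")
    # Never schedule the stimulus before the cue itself.
    return max(0.0, user_reaction_ms - lead_ms)
```

For example, `ems_onset_ms(250.0, 40.0)` schedules the stimulus 210 ms after the cue, whereas the "as soon as possible" strategy would correspond to an onset near 0 ms.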

Jun Nishida, S. Matsuda, H. Matsui, Shan-Yuan Teng, Zoe Liu, K. Suzuki, Pedro Lopes. In Proc. UIST'20

UIST'20 best paper award, top 1%

We engineered HandMorph, an exoskeleton that approximates the experience of having a smaller grasping range. It uses mechanical links to transmit motion from the wearer’s fingers to a smaller hand with five anatomically correct fingers. The result is that HandMorph miniaturizes a wearer’s grasping range while transmitting haptic feedback. Unlike other size-illusions based on virtual reality, HandMorph achieves this in the user’s real environment, preserving the user’s physical and social contexts. As such, our device can be integrated into the user’s workflow, e.g., to allow product designers to momentarily change their grasping range into that of a child while evaluating a toy prototype.

Jas Brooks, Steven Nagels, Pedro Lopes. In Proc. CHI'20

CHI'20 best paper award, top 1%

We explore a temperature illusion that uses low-powered electronics and enables the miniaturization of simple warm and cool sensations. Our illusion relies on the properties of certain scents, such as the coolness of mint or hotness of peppers. These odors trigger not only the olfactory bulb, but also the nose’s trigeminal nerve, which has receptors that respond to both temperature and chemicals. To exploit this, we engineered a wearable device that emits up to three custom-made “thermal” scents directly to the user’s nose. Breathing in these scents causes the user to feel warmer or cooler.

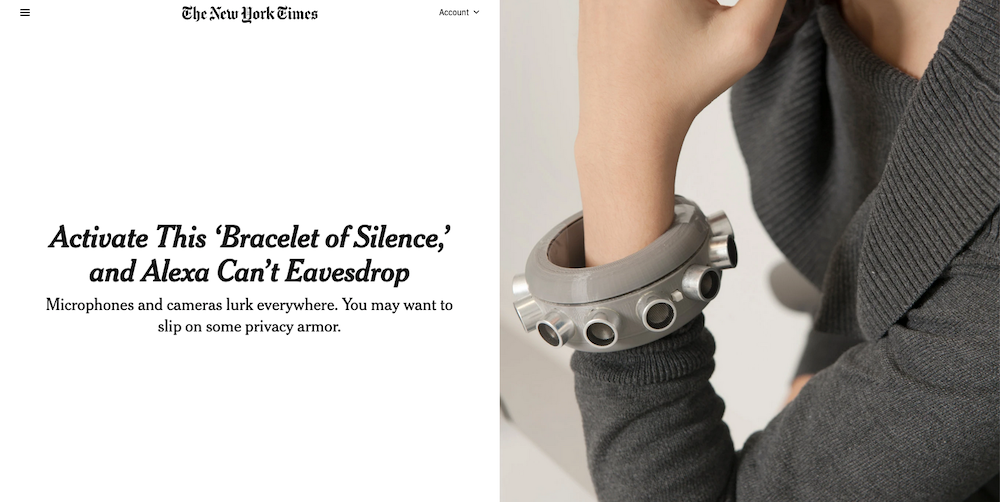

Yuxin Chen*, Huiying Li*, Shan-Yuan Teng*, Steven Nagels, Zhijing Li, Pedro Lopes, Ben Y. Zhao, Haitao Zheng. In Proc. CHI'20

* authors contributed equally

CHI'21 honorable mention award, top 5%

We engineered a wearable microphone jammer that is capable of disabling microphones in its user’s surroundings, including hidden microphones. Our device is based on a recent exploit that leverages the fact that when exposed to ultrasonic noise, commodity microphones will leak the noise into the audible range. Our jammer is more efficient than stationary jammers. This work was a collaboration led by Heather Zheng (who runs the SAND Lab) at UChicago.

Floyd Mueller*, Pedro Lopes*, Paul Strohmeier, Wendy Ju, Caitlyn Seim, Martin Weigel, Suranga Nanayakkara, Marianna Obrist, Zhuying Li, Joseph Delfa, Jun Nishida, Elizabeth Gerber, Dag Svanaes, Jonathan Grudin, Stefan Greuter, Kai Kunze, Thomas Erickson, Steven Greenspan, Masahiko Inami, Joe Marshall, Harald Reiterer, Katrin Wolf, Jochen Meyer, Thecla Schiphorst, Dakuo Wang, Pattie Maes. In Proc. CHI’20. * authors contributed equally

Human-computer integration (HInt) is an emerging paradigm in which computational and human systems are closely interwoven; with rapid technological advancements and growing implications, it is critical to identify an agenda for future research in HInt.

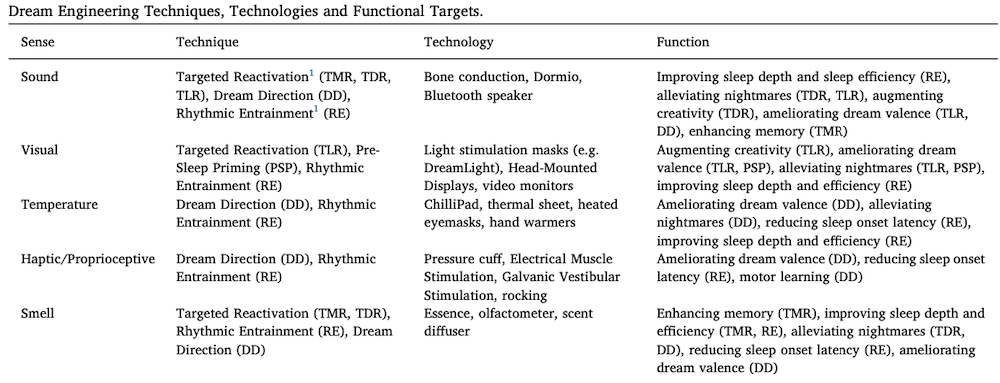

Michelle Carr, Adam Haar*, Judith Amores*, Pedro Lopes*, Guillermo Bernal, Tomás Vega, Oscar Rosello, Abhinandan Jain, Pattie Maes. In Proc. Consciousness and Cognition (Vol. 83, 2020) (journal paper) * authors contributed equally

We draw a parallel between recent VR haptic/sensory devices, which aim to stimulate more senses for virtual interactions, and the work of sleep/dream researchers, who are exploring how senses are integrated and influence the sleeping mind. We survey recent developments in HCI technologies and analyze which might provide a useful hardware platform to manipulate dream content by sensory manipulation, i.e., to engineer dreams. This work was led by Michelle Carr (University of Rochester) and in collaboration with the Fluid Interfaces group (MIT Media Lab).

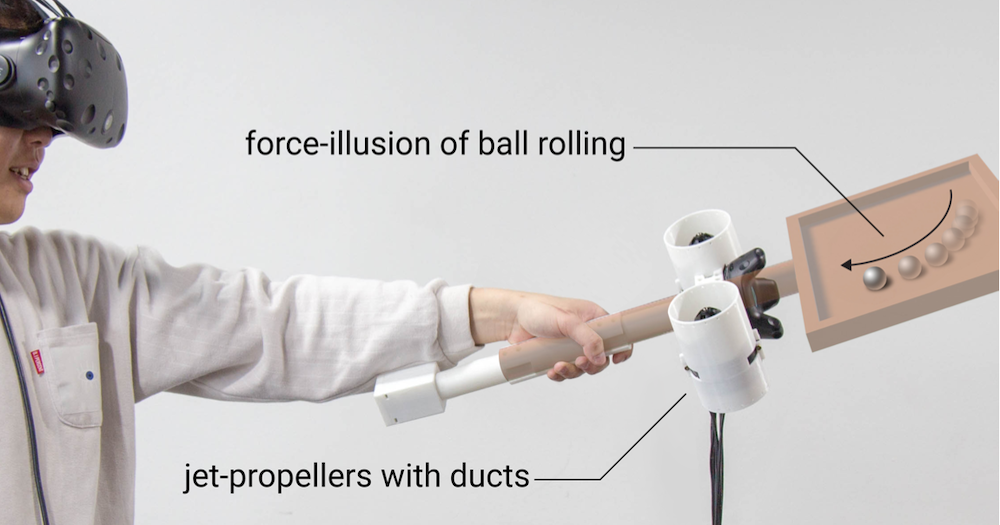

Seungwoo Je, Myung Jin Kim, Woojin Lee, Byungjoo Lee, Xing-Dong Yang, Pedro Lopes, Andrea Bianchi. In Proc. UIST’19.

We engineered Aero-plane, a force-feedback handheld controller based on two miniature jet-propellers that can render shifting weights of up to 14 N within 0.3 seconds. Unlike other ungrounded haptic devices, our prototype realistically simulates weight changes over 2D surfaces. This work was a collaboration and was led by Andrea Bianchi, who runs the MAKinteract group at KAIST.

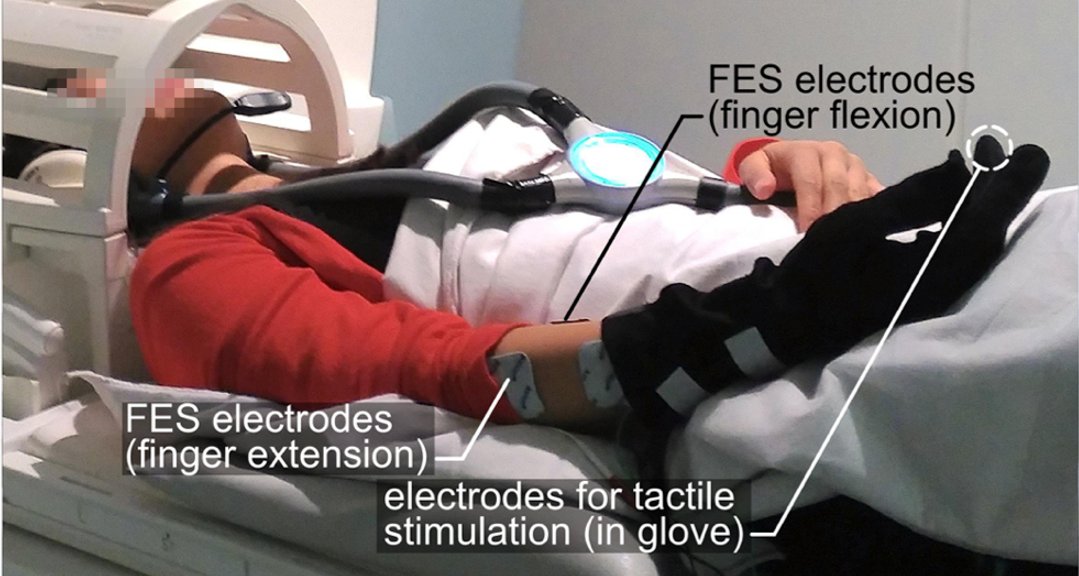

Jakub Limanowski, Pedro Lopes, Janis Keck, Patrick Baudisch, Karl Friston, and Felix Blankenburg

Journal article at Cerebral Cortex, to appear.

Tactile input generated by one’s own agency is generally attenuated. Conversely, externally caused tactile input is enhanced; e.g., during haptic exploration. We used functional magnetic resonance imaging (fMRI) to understand how the brain accomplishes this weighting. Our results suggest an agency-dependent somatosensory processing in the parietal operculum.

Shunichi Kasahara, Jun Nishida and Pedro Lopes. In Proc. CHI’19 and Demo at SIGGRAPH eTech'19

We found that it is possible to optimize the timing of haptic systems to accelerate human reaction time without fully compromising the user's sense of agency.

Lukas Gehrke, Sezen Akman, Albert Chen, Pedro Lopes, ..., Klaus Gramann. In Proc. CHI’19.

We detected conflicts in visuo-haptic integration by analyzing event-related potentials (ERP) during interaction with virtual objects. In our EEG study, participants touched virtual objects and received either no haptic feedback, vibration, or vibration and EMS. We found that the early negativity component (prediction error) was more pronounced in unrealistic VR situations, indicating we successfully detected haptic conflicts.

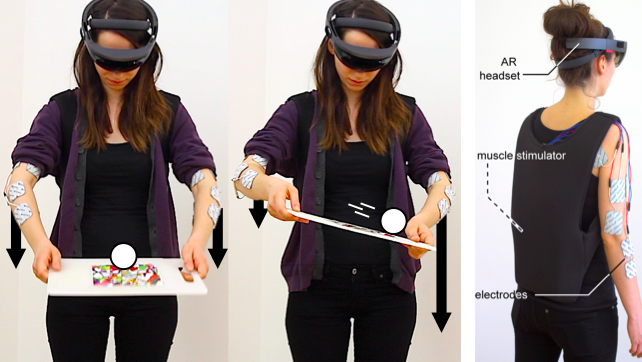

Pedro Lopes, Sijing You, Alexandra Ion, and Patrick Baudisch. In Proc. CHI’18

We present a mobile system that enhances mixed reality experiences, displayed on a Microsoft HoloLens, with force feedback by means of electrical muscle stimulation (EMS). The benefit of our approach is that it adds physical forces while keeping the users’ hands free to interact unencumbered—not only with virtual objects, but also with physical objects, such as props and appliances that are an integral part of both virtual and real worlds. [more soon]

Robert Kovacs, Alexandra Ion, Pedro Lopes, Tim Oesterreich, Johannes Filter, Philip Otto, Tobias Arndt, Nico Ring, Melvin Witte, Anton Synytsia, and Patrick Baudisch. In Proc. UIST’18.

TrussFormer is an integrated end-to-end system that allows users to 3D print large-scale kinetic structures, i.e., structures that involve motion and deal with dynamic forces. TrussFormer incorporates linear actuators into these rigid truss structures in a way that they move “organically”, i.e., hinge around multiple points at the same time. [Read more]

This is work I did in collaboration with Robert Kovacs (who is the principal investigator on this topic).

Alexandra Ion, Robert Kovacs, Oliver Schneider, Pedro Lopes, Patrick Baudisch. In Proc. CHI’18

We enable 3D printed objects to display different textures by transforming their surface. We demonstrate several textured 3D objects, including a shoe sole that transforms from flat to treaded, a textured door handle providing tactile feedback to visually impaired users, and a configurable bicycle grip. [more soon]

This is work I did in collaboration with Alexandra Ion (who is the principal investigator on this topic).

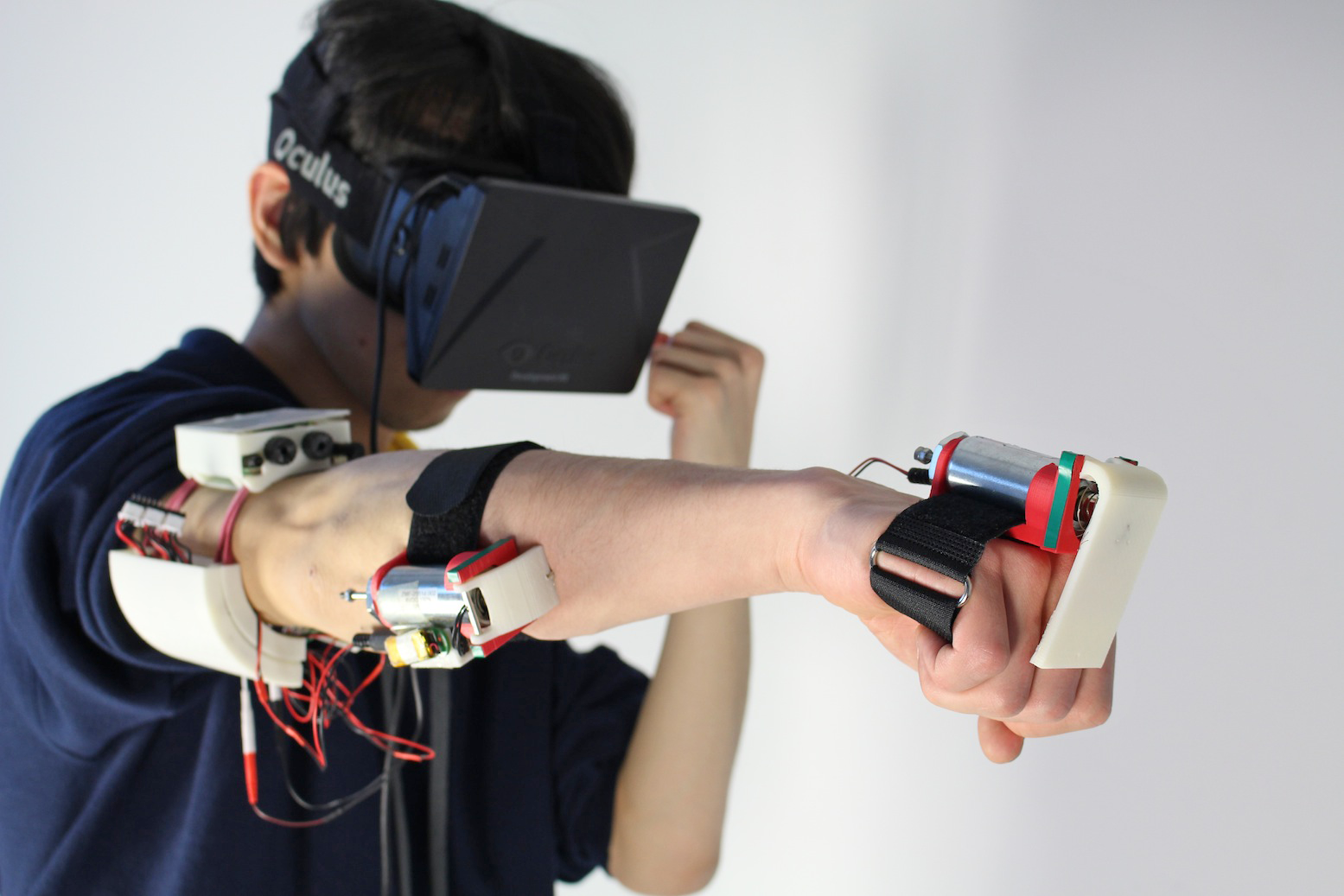

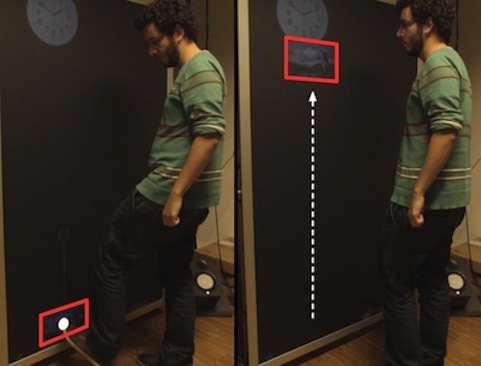

Pedro Lopes, Sijing You, Lung-Pan Cheng, Sebastian Marwecki and Patrick Baudisch. In Proc. CHI’17 & demo at SIGGRAPH'17.

We explored how to add haptics to walls and other heavy objects in virtual reality. Our contribution is that we prevent the user’s hands from penetrating virtual objects by means of electrical muscle stimulation (EMS). As the user lifts a virtual cube, our system lets the user feel the weight and resistance of the cube. The heavier the cube and the harder the user presses the cube, the stronger the counterforce the system generates.

Pedro Lopes, Doga Yueksel, François Guimbretière, and Patrick Baudisch. In Proc. UIST’16.

With muscle-plotter we explore how to create more expressive EMS-based systems. Muscle-plotter achieves this by persisting EMS output, allowing the system to build up a larger whole. More specifically, muscle-plotter spreads out the 1D signal produced by EMS over a 2D surface by steering the user’s wrist, while the user drags their hand across the surface. Rather than repeatedly updating a single value, this renders many values into curves.

Alexandra Ion, Johannes Frohnhofen, Ludwig Wall, Robert Kovacs, Mirela Alistar, Jack Lindsay, Pedro Lopes, Hsiang-Ting Chen, and Patrick Baudisch. In Proc. UIST’16

UIST'16 Honourable Mention, top 5%.

So far, metamaterials were understood as materials—we want to think of them as machines. We demonstrate metamaterial objects that perform a mechanical function. Such metamaterial mechanisms consist of a single block of material the cells of which play together in a well-defined way in order to achieve macroscopic movement. Our metamaterial door latch, for example, transforms the rotary movement of its handle into a linear motion of the latch. [more]

This is work I did in collaboration with Alexandra Ion (who is the principal investigator on this topic).

Pedro Lopes, Alexandra Ion, and Patrick Baudisch. In Proc. UIST'15

UIST'15 best talk nomination

We present impacto, a device designed to render the haptic sensation of hitting and being hit in virtual reality. The key idea that allows the small and light impacto device to simulate a strong hit is that it decomposes the stimulus: it renders the tactile aspect of being hit by tapping the skin using a solenoid; it adds impulse to the hit by thrusting the user’s arm backwards using electrical muscle stimulation. The device is self-contained, wireless, and small enough for wearable use.

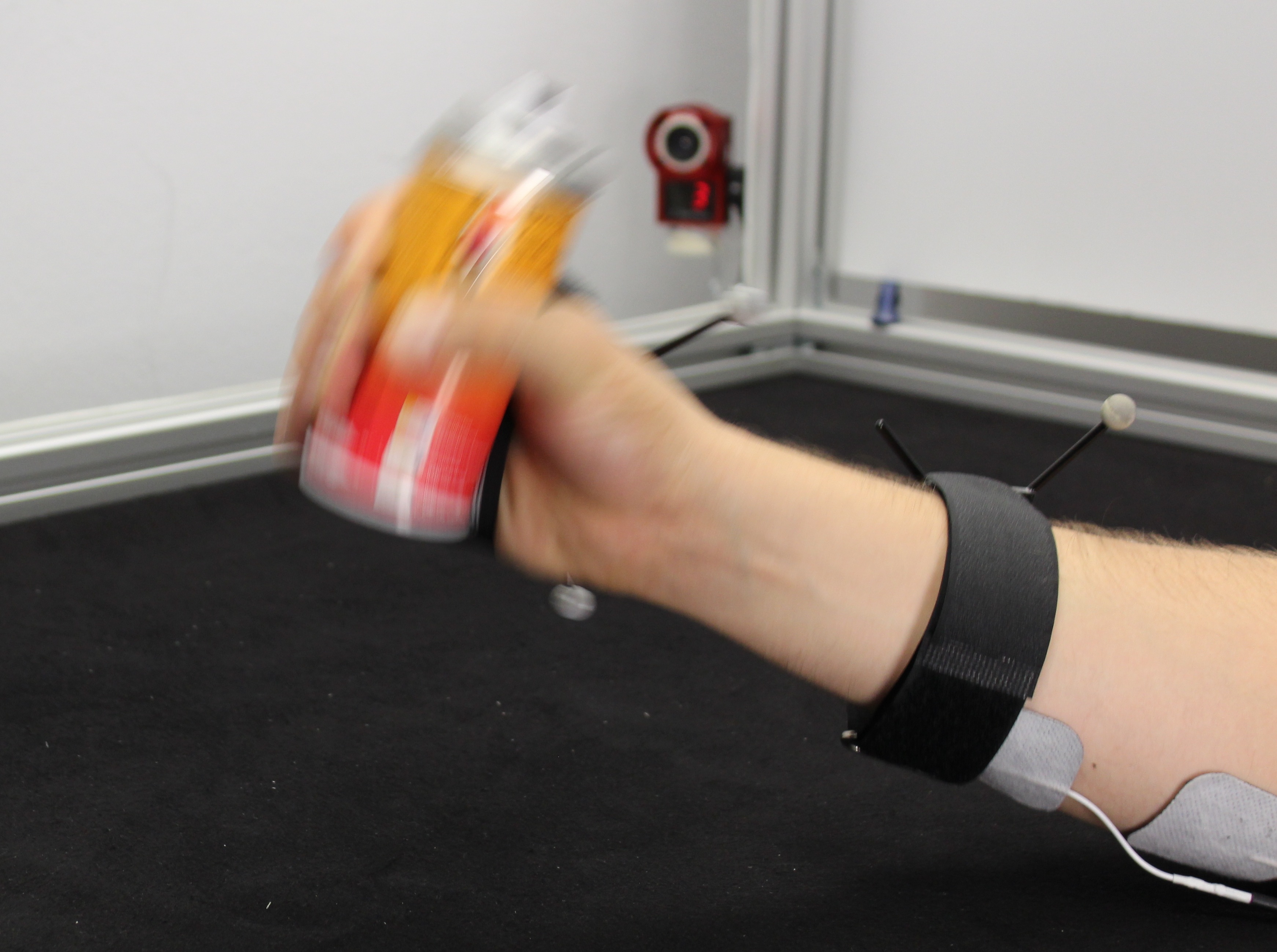

Pedro Lopes, Patrik Jonell, and Patrick Baudisch. In Proc. CHI’15

CHI'15 best paper award, top 1%

We propose extending the affordance of objects by allowing them to communicate dynamic use, such as (1) motion (e.g., spray can shakes when touched), (2) multi-step processes (e.g., spray can sprays only after shaking), and (3) behaviors that change over time (e.g., empty spray can does not allow spraying anymore). Rather than enhancing objects directly, however, we implement this concept by enhancing the user. We call this affordance++. By stimulating the user’s arms using electrical muscle stimulation, our prototype allows objects not only to make the user actuate them, but also perform required movements while merely approaching the object, such as not to touch objects that do not “want” to be touched.

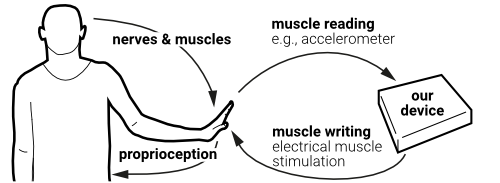

Pedro Lopes, Alexandra Ion, Willi Mueller, Daniel Hoffmann, Patrik Jonell, and Patrick Baudisch. In Proc. CHI’15.

CHI'15 best talk award

We propose a new way of eyes-free interaction for wearables. It is based on the user’s proprioceptive sense, i.e., rather than seeing, hearing, or feeling an outside stimulus, users feel the pose of their own body. We have implemented a wearable device called Pose-IO that offers input and output based on proprioception. Users communicate with Pose-IO through the pose of their wrists. Users enter information by performing an input gesture by flexing their wrist, which the device senses using a 3-axis accelerometer. Users receive output from Pose-IO by finding their wrist posed in an output gesture, which Pose-IO actuates using electrical muscle stimulation.

This core concept paved the way for the second line of research in my PhD, in which the interactive system based on EMS allows users to access information (continued in Muscle Plotter and Affordance++).
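As a rough illustration of the input side described above, wrist flexion can be estimated from a 3-axis accelerometer by measuring how far one sensor axis tilts away from the horizontal. This is a minimal sketch, not the Pose-IO implementation: the axis convention, the 30 degree threshold, and the function names are all assumptions, and it presumes the wrist is held still so that the reading is dominated by gravity.

```python
import math


def tilt_deg(ax: float, ay: float, az: float) -> float:
    """Angle (degrees) between the sensor's x axis and the horizontal plane.

    Assumes a static reading, i.e., the accelerometer measures gravity only.
    """
    return math.degrees(math.atan2(ax, math.hypot(ay, az)))


def classify_flexion(ax: float, ay: float, az: float,
                     threshold_deg: float = 30.0) -> str:
    """Label a reading as 'flexed', 'extended', or 'neutral'.

    The threshold and the sign convention (positive tilt = flexed)
    are hypothetical choices for this sketch.
    """
    angle = tilt_deg(ax, ay, az)
    if angle > threshold_deg:
        return "flexed"
    if angle < -threshold_deg:
        return "extended"
    return "neutral"
```

A reading of `(0.7, 0.0, 0.7)` corresponds to a 45 degree tilt and is labeled as flexed under these assumptions, while `(0.0, 0.0, 1.0)` is neutral.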

Lung-Pan Cheng, Patrick Lühne, Pedro Lopes, Christoph Sterz, and Patrick Baudisch. In Proc. CHI’14

We present haptic turk, a different approach to motion platforms that is light and mobile. The key idea is to replace motors and mechanical components with humans. All haptic turk setups consist of a player who is supported by one or more human-actuators. The player enjoys an interactive experience, such as a flight simulation. The motion in the player’s experience is generated by the actuators who manually lift, tilt, and push the player's limbs or torso.

The idea for this line of research (primary investigator is Lung-Pan Cheng) was born out of discussions on interactive devices based on EMS. In fact, one of the possible form factors for an EMS device is to apply the stimuli to a surrogate user, who in turn actuates the user. Interestingly, we realized that, instead of using EMS, a set of real-time actuation instructions achieves the same effect.

Ricardo Jota, Pedro Lopes, Daniel Wigdor, and Joaquim Jorge. In Proc. CHI’14

We explore the design space of foot input on vertical surfaces, and propose three distinct interaction modalities: hand, foot tapping, and foot gesturing. We distinguish between feet and hands by feeding the sound of surface contacts (captured by a microphone) to a trained machine learning classifier.

This is the second in a line of work (together with Ricardo Jota) using machine learning and acoustic features to distinguish which part of the user's body (e.g., feet vs. hands) contacts an interactive surface (see also my "Augmenting touch interaction through acoustic sensing" paper).

Anne Roudaut, Andreas Rau, Christoph Sterz, Max Plauth, Pedro Lopes, and Patrick Baudisch. In Proc. CHI’13

Gesture output is based on the idea of using spatial gestures not only for input but also for output. Analogous to gesture input, gesture output moves the user’s finger in a gesture, which the user then recognizes. A motion path forming a “5”, for example, may inform the user about five unread messages; a heart-shaped path may serve as a message from a close friend.

This idea that one could use the exact same gestural vocabulary for input and output (which we called symmetric interaction) inspired my work in "Proprioceptive Interaction" (CHI'15), in which we applied this idea to a device that overlaps with the human body.

Pedro Lopes, and Patrick Baudisch. In Proc. CHI’13

IEEE World Haptics, People’s Choice Nomination for Best Demo

Force feedback devices resist miniaturization, because they require physical motors and mechanics. We propose mobile force feedback by eliminating motors and instead actuating the user’s muscles using electrical stimulation. Without the motors, we obtain substantially smaller and more energy-efficient devices. Our prototype fits on the back of a mobile phone. It actuates users’ forearm muscles via four electrodes, which causes users’ muscles to contract involuntarily, so that they tilt the device sideways. As users resist this motion using their other arm, they perceive force feedback.

This was the core project that initiated my line of work in increasing realism (continued in Impacto and VR walls).

Stefanie Mueller, Pedro Lopes, and Patrick Baudisch. In Proc. UIST’12

Constructable is an interactive drafting table that produces precise physical output in every step. Users interact by drafting directly on the workpiece using a hand-held laser pointer. The system tracks the pointer, beautifies its path, and implements its effect by cutting the workpiece using a fast high-powered laser cutter. [more]

This is work I did in collaboration with Stefanie Mueller (who is the principal investigator on this topic).

Art projects from my research on EMS

A side effect of devices interacting directly through the user’s muscles is that they question the traditional model of users controlling devices. This topic stimulated a lot of discussion in the lab.

One of the results of this debate was the creation of a prototypical device that reverses this paradigm and controls the user. Our creation, shown below, is a technological parasite that lives off human energy. The device harvests kinetic energy by electrically stimulating participants’ wrists. This causes their wrists to involuntarily turn a crank, which powers the device.

We felt the debate and thus the device were of interest to the general public, but we also felt that its nature of asking a question (rather than giving an answer) made it more suitable for an artistic outlet. We have exhibited this device as an installation at prestigious venues such as the Ars Electronica (Linz, 2017) and Science Gallery (Dublin, 2017).

Pedro Lopes, Alexandra Ion, Robert Kovacs, David Lindlbauer and Patrick Baudisch

Exhibited at Ars Electronica'17, World Economic Forum (San Francisco) and Science Gallery Dublin

Ad infinitum is a parasitical entity which lives off human energy. It lives untethered and off the grid. This parasite reverses the dominant role that mankind has with respect to technologies: the parasite shifts humans from “users” to “used”. Ad infinitum parasitically attaches electrodes onto the human visitors and harvests their kinetic energy by electrically persuading them to move their muscles using EMS. [more]

This piece is a critical take on the canonical HCI configuration, in which a human is always in control. Instead, here participants experience how it feels when a machine is in control.

Pedro Lopes (and supporting musicians)

Exhibited at Print Screen Festival (Tel Aviv) and Disruption Network Lab (curated by Tatiana Bazzichelli)

Conductive-ensemble is an art performance in which the musicians are controlled by the audience. On stage, a quartet of musicians is ready to “play”. To interact, audience members open their browser and navigate to the performance’s website. When they tap on a musician’s name on their screen, the website sends a stream of electrical muscle stimulation to that musician’s muscles, causing the musician to play "against their own will".

Art Exhibitions

- Ad Infinitum, Ars Electronica’17, Linz

- Ad Infinitum, World Economic Forum, San Francisco

- Ad Infinitum, Science Gallery Dublin

- Conductive Ensemble, Print Screen Festival, Tel Aviv

- Conductive Ensemble, Disruption Network Lab, SPEKTRUM

- Ad Infinitum, Natural History Museum, Bern

- Affordance++ installation, Laznia Centre for Contemporary Art, Gdansk

Magazine Articles with overarching vision

I've published two overarching articles in IEEE magazines that provide a deeper discussion of my research & vision for interactive devices that overlap with the user's body. These two articles discuss how interactive systems based on EMS fit this vision.

Pedro Lopes and Patrick Baudisch

Magazine article at IEEE Computer, vol 50, no.10, 2017.

Pedro Lopes and Patrick Baudisch

Magazine article at IEEE Pervasive, vol. 16 Issue No. 03, 2017.

Additional publications in Human-Computer Interaction

- 9. Towards understanding the design of bodily integration, Florian Mueller, Pedro Lopes, Josh Andres, Richard Byrne, Nathan Semertzidis, Zhuying Li, Jarrod Knibbe, Stefan Greuter, In Int. Journal of Human-Computer Studies. 2021. paper

- 8. Accelerating Skill Acquisition of Two-Handed Drumming using Pneumatic Artificial Muscles, Takashi Goto, Swagata Das, Pedro Lopes, Yuichi Kurita and Kai Kunze, In Proc. Augmented Human’20. paper

- 7. Interacting with Wearable Computers by means of Functional Electrical Muscle Stimulation. Pedro Lopes and Patrick Baudisch. In Proc. NAT '17 (Neuroadaptive Technology, paper) Best Talk award.

- 6. I, the device: observing human aversion from an HCI perspective, Ricardo Jota, Pedro Lopes, and Joaquim Jorge. 2012. In Proc. CHI EA’12, pg. 261-270. (paper)

- 5. Augmenting touch interaction through acoustic sensing, Pedro Lopes, Ricardo Jota, Joaquim Jorge, In Proc. ITS’11 (Interactive Tabletops and Surfaces). (paper, video)

- 4. Combining bimanual manipulation and pen-based input for 3D modelling. Pedro Lopes, Daniel Mendes, Bruno de Araújo, Joaquim Jorge, SBIM’11: Sketch-based Interface and Modelling, in cooperation with ACM SIGGRAPH and EUROGRAPHICS (paper, video)

- 3. Hands-on interactive tabletop LEGO application. Daniel Mendes, Pedro Lopes, and Alfredo Ferreira, in Proc. ACE ’11: Proceedings of the 2010 ACM SIGCHI international conference on Advances in Computer Entertainment Technology (paper, video)

- 2. Battle of the DJs: an HCI Perspective of Traditional, Virtual, Hybrid and Multitouch DJing. Pedro Lopes, Alfredo Ferreira, João Pereira, In Proc. NIME’11 (New Interfaces for Musical Expression) (paper, video)

- 1. Multitouch interactive DJing surface. Pedro Lopes, Alfredo Ferreira, João Pereira. In Proc. ACE’10 (Advances in Computer Entertainment). (paper, video)

Academic Recognition Awards/Prizes (selected, see CV for details)

- Sloan Fellow 2022

- NSF CAREER award 2021

- 7x Best Paper Awards: CHI 2024, CHI 2023, UIST 2021, CHI 2021, UIST 2020, CHI 2020, CHI 2015

- 4x Best Demo Awards: UIST 2021 (2x), CHI 2021, CHI 2022

- 7x Honorable Mention Awards: UIST 2024, CHI 2024, UIST 2023, UIST 2022, CHI 2021, CHI 2020, UIST 2016

- 2x Honorable Mention Demo Awards: UIST 2024, UIST 2023

- 1x Best Talk award (CHI 2015—sadly this award does not exist anymore)

- 2x Best Talk nominations (CHI 2015, UIST 2015—sadly this award does not exist anymore)

Teaching

I teach a number of technical classes in Computer Science, with a focus on hardware and human-computer interaction. My teaching offerings can be found here. Moreover, I often teach workshops at scientific venues, summer courses and guest lectures outside of UChicago; more information on these can be found in my CV.

Conference organizing (selected, see CV for details)

- 7. Technical Program Committee (TPC) Chair for ACM CHI 2026

- 6. Program Chair (papers chair) for ACM UIST 2024

- 5. Program Chair (papers chair) for EuroHaptics 2024

- 4. Program Chair (papers chair) for ACM SUI 2023

- 3. General chair for ACM AH’2021 (Augmented Humans)

- 2. Interactivity (Demo) Chair for ACM CHI’21

- 1. Student Innovation Contest Chair for ACM UIST’16 (also provided our open-source EMS hardware to 18 teams in this contest)

Program Committees (selected, see CV for details)

- 20. Program Committee Member for ACM CHI’23

- 19. Program Committee Member for ACM UIST’22

- 18. Program Committee Member for ACM CHI’22

- 17. Best Paper Committee for IEEE World Haptics 2021

- 16. Program Committee Member for ACM UIST’21

- 15. Program Committee Member for IEEE WHC’21

- 14. Program Committee Member for IEEE VR’21

- 13. Program Committee Member for ACM ISWC’21

- 12. Program Committee Member for ACM UIST’20

- 11. Program Committee Member for ACM CHI’20

- 10. Program Committee for ACM Symposium on Applied Perception’20

- 9. Program Committee Member for CHI’20 Interactivity

- 8. Program Committee Member for ACM CHI’19

- 7. Program Committee Member for ACM IUI’19

- 6. Program Committee Member for IEEE VR’19

- 5. Program Committee Member for ACM Augmented Human’19

- 4. Program Committee Member for ACM UIST’18

- 3. Program Committee Member for ACM CHI’18

- 2. Program Committee Member for IEEE VR’18

- 1. Program Committee Member for ACM UIST’17